Cloud-native infrastructure is the hardware and software that supports applications designed for the cloud. For compute infrastructure, the underlying hardware has traditionally been x86-based cloud instances on which containers and other IaaS (Infrastructure-as-a-Service) applications are built. In the last few years, developers have gained the option of deploying their cloud-native applications on a multi-architecture infrastructure that provides better performance and scalability for their applications and, ultimately, a better total cost of ownership for their end customers.

In computing, CPU architecture reflects the various instruction sets that CPUs use to manage workloads, manipulate data, and turn algorithms into compiled binaries. Initially, cloud infrastructure was standardized around legacy architectures, such as the x86 instruction set (the 64-bit version is also referred to as AMD64, x86-64, or x64). Today, cloud providers and server vendors offer Arm-based platforms (also referred to as ARM64 or AArch64). For a broad set of cloud-native applications, the Arm architecture offers increased application performance and scalability. Transitioning workloads from legacy architectures to Arm also improves sustainability because of the energy efficiency of the Arm architecture. The overall solution offers a better total cost of ownership.

The two major architectures, x86 and Arm, take different approaches to performance and efficiency. Traditional x86 servers grew as an extension of PCs, where a variety of software was run on a single computer by a single user. They use simultaneous multithreading (SMT) to increase performance. Arm servers are designed for cloud-native applications made up of microservices and containers and don’t use multithreading. Instead, Arm servers use higher core counts to increase performance. Designing for cloud-native applications results in less resource contention, consistent performance, and better security compared to multithreading. It also means you don’t have to overprovision compute resources. The Arm architecture also offers efficiency advantages, resulting in lower power consumption and increased sustainability. More than 270 billion Arm-based chips have shipped to date.

Because of these benefits, software developers have embraced the opportunity to develop cloud-native applications and workloads for Arm-based platforms. This lets them unlock the platform benefits of better price performance and energy efficiency. Arm-based hardware is offered by all major cloud providers: Amazon Web Services (AWS) (Graviton), Microsoft Azure (Dpsv5), Google Cloud Platform (GCP) (T2A), and Oracle Cloud Infrastructure (OCI) (A1). AWS Graviton processors are custom-built by Amazon Web Services using 64-bit Arm Neoverse cores to deliver the best price performance for your cloud workloads running in Amazon EC2. Azure and GCP offer Arm-based virtual machines in the cloud based on Ampere Altra and AmpereOne processors. Recently, Azure also announced their own custom-built Arm-based processor called Cobalt 100.

The shift to multi-architecture infrastructure is driven by several factors, including the need for greater efficiency, cost savings, and sustainability in cloud computing. To achieve these benefits, developers must follow multi-architecture best practices for software development, add Arm architecture support to containers, and deploy these containers on hybrid Kubernetes clusters with x86 and Arm-based nodes.

All major OS distributions and languages, such as Java, .NET, C/C++, Go, Python, and Rust, support the Arm architecture.

- Java programs are compiled to bytecode that can run on any JVM regardless of the underlying architecture, without recompilation. All the major flavors of Java are available on Arm-based platforms, including OpenJDK, Amazon Corretto, and Oracle Java.

- .NET 5 and later support Linux on ARM64/AArch64-based platforms. Each release of .NET brings more optimizations for Arm-based platforms.

- Go, Python, and Rust offer performance improvements for various applications in their latest releases.
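One practical wrinkle when working across toolchains is that the same architecture goes by several names: Go and container platforms say `amd64` and `arm64`, while `uname -m` on Linux reports `x86_64` and `aarch64`. The following is a minimal sketch of a normalizer for those aliases; the helper name `normalizeArch` is hypothetical and not part of any library.

```go
package main

import (
	"fmt"
	"runtime"
	"strings"
)

// normalizeArch (hypothetical helper) maps common aliases for the two
// architectures discussed here onto the names used by Go and by container
// platforms. Unknown values are returned trimmed and lowercased.
func normalizeArch(name string) string {
	n := strings.ToLower(strings.TrimSpace(name))
	switch n {
	case "amd64", "x86_64", "x86-64", "x64":
		return "amd64"
	case "arm64", "aarch64":
		return "arm64"
	default:
		return n
	}
}

func main() {
	// runtime.GOOS and runtime.GOARCH are fixed at compile time to the
	// platform the binary was built for.
	fmt.Println("compiled for:", runtime.GOOS+"/"+runtime.GOARCH)
	for _, alias := range []string{"x86_64", "AArch64", "x64", "ARM64"} {
		fmt.Printf("%-8s -> %s\n", alias, normalizeArch(alias))
	}
}
```

The same source can be cross-compiled for Arm from any machine by setting `GOOS=linux GOARCH=arm64` before running `go build`.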

In this constantly evolving world of containers and microservices, multi-architecture container images are the easiest way to deploy applications and hide the underlying hardware architecture. Building multi-architecture images is slightly more complex than building single-architecture images. Docker provides two ways to create multi-architecture images: docker manifest and docker buildx.

- With docker manifest, you build each architecture separately and join them together into a multi-architecture image. This is a slightly more involved approach that requires a good understanding of how manifest files work.

- docker buildx makes it easy and efficient to build multi-architecture images with a single command. An example of how docker buildx builds multi-architecture images is provided in the next section.
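To make the manifest idea concrete: a multi-architecture image is published as an index (a manifest list) whose entries each point at a per-platform image, and the container runtime picks the entry that matches the node. The sketch below models that selection. The field names follow the OCI image index layout, but the index is heavily trimmed, the digests are placeholders, and `selectManifest` is a hypothetical helper rather than Docker's actual implementation.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Platform and Descriptor mirror only the image index fields this sketch needs.
type Platform struct {
	Architecture string `json:"architecture"`
	OS           string `json:"os"`
}

type Descriptor struct {
	Digest   string   `json:"digest"`
	Platform Platform `json:"platform"`
}

type ImageIndex struct {
	Manifests []Descriptor `json:"manifests"`
}

// exampleIndex is a hypothetical, trimmed index; the digests are placeholders.
const exampleIndex = `{
  "schemaVersion": 2,
  "manifests": [
    {"digest": "sha256:placeholder-amd64", "platform": {"architecture": "amd64", "os": "linux"}},
    {"digest": "sha256:placeholder-arm64", "platform": {"architecture": "arm64", "os": "linux"}}
  ]
}`

// parseIndex decodes the JSON form of an image index.
func parseIndex(raw string) (ImageIndex, error) {
	var idx ImageIndex
	err := json.Unmarshal([]byte(raw), &idx)
	return idx, err
}

// selectManifest returns the digest of the first entry matching osName/arch.
func selectManifest(idx ImageIndex, osName, arch string) (string, bool) {
	for _, m := range idx.Manifests {
		if m.Platform.OS == osName && m.Platform.Architecture == arch {
			return m.Digest, true
		}
	}
	return "", false
}

func main() {
	idx, err := parseIndex(exampleIndex)
	if err != nil {
		panic(err)
	}
	for _, arch := range []string{"amd64", "arm64"} {
		digest, ok := selectManifest(idx, "linux", arch)
		fmt.Println(arch, digest, ok)
	}
}
```

Because the lookup happens at pull time, the same image tag can be used in a deployment regardless of which architecture a pod lands on.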

Another important aspect of the software development lifecycle is continuous integration and continuous delivery (CI/CD) tooling. CI/CD pipelines are crucial in automating the build, test, and deployment of multi-architecture applications. You can build your application code natively on Arm in a CI/CD pipeline. For example, GitLab and GitHub have runners that support building your application natively on Arm-based platforms. You can also use tools like AWS CodeBuild and AWS CodePipeline as your CI/CD tools. If you already have an x86-based pipeline, you can start by creating a separate pipeline with ARM64 build and deployment targets. Identify whether any unit tests fail. Use blue-green deployments to stage your application and test the functionality. Alternatively, use canary-style deployments to host a small percentage of traffic on Arm-based deployment targets for your users to try.

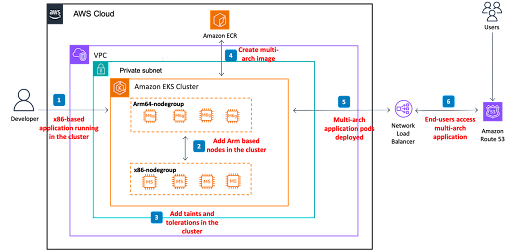

Let’s look at an example of a cloud-native application and infrastructure stack and what it takes to make it multi-architecture. The figure above depicts a scenario of a Go-based web application deployed in an Amazon EKS cluster, along with the high-level steps to make it multi-architecture.

- Create a multi-architecture Amazon EKS cluster to run the x86/amd64 version of the application.

- Add Arm-based nodes to the cluster. Amazon EKS supports node groups of multiple architectures running in a single cluster. You can add Arm-based nodes to the cluster, for example m7g and c7g EC2 instances. This results in a hybrid EKS cluster with both x86 and ARM64 nodes.

- Add taints and tolerations in the cluster. When starting with multiple architectures, you’ll have to configure the Kubernetes node selector. A node taint (or a node selector) lets the Kubernetes scheduler know that a particular node is designated for one architecture only. A toleration lets you designate pods that can be scheduled on tainted nodes. In a hybrid cluster with nodes from different architectures (x86 and ARM64), adding a taint on the nodes avoids the possibility of scheduling pods on the wrong architecture.

- Create a multi-architecture Dockerfile, build a multi-arch Docker image, and push it to a container registry of your choice, such as Docker registry or Amazon ECR.

- Deploy this multi-arch application image in the hybrid EKS cluster with both x86 and Arm-based nodes.

- Users access the multi-architecture version of the application through the same load balancer.
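The interplay between node selectors, taints, and tolerations in the steps above can be sketched as a small model. This is a deliberately simplified illustration, not the Kubernetes scheduler: it handles only exact-match tolerations and the `NoSchedule` effect, and the taint key `arch` is a hypothetical choice. The label `kubernetes.io/arch` is the well-known label that Kubernetes sets on each node.

```go
package main

import "fmt"

type Taint struct{ Key, Value, Effect string }

// Toleration models exact-match tolerations only (a simplification).
type Toleration struct{ Key, Value, Effect string }

type Node struct {
	Name   string
	Labels map[string]string
	Taints []Taint
}

type Pod struct {
	Name         string
	NodeSelector map[string]string
	Tolerations  []Toleration
}

const archLabel = "kubernetes.io/arch"

// Example nodes: the Arm node carries a hypothetical "arch=arm64" taint.
var armNode = Node{
	Name:   "arm-node",
	Labels: map[string]string{archLabel: "arm64"},
	Taints: []Taint{{Key: "arch", Value: "arm64", Effect: "NoSchedule"}},
}

var x86Node = Node{
	Name:   "x86-node",
	Labels: map[string]string{archLabel: "amd64"},
}

// tolerates reports whether the pod has a toleration matching the taint.
func tolerates(p Pod, t Taint) bool {
	for _, tol := range p.Tolerations {
		if tol.Key == t.Key && tol.Value == t.Value && tol.Effect == t.Effect {
			return true
		}
	}
	return false
}

// canSchedule applies two checks: every nodeSelector entry must match a
// node label, and every NoSchedule taint on the node must be tolerated.
func canSchedule(p Pod, n Node) bool {
	for k, v := range p.NodeSelector {
		if n.Labels[k] != v {
			return false
		}
	}
	for _, t := range n.Taints {
		if t.Effect == "NoSchedule" && !tolerates(p, t) {
			return false
		}
	}
	return true
}

func main() {
	armPod := Pod{
		Name:         "arm-pod",
		NodeSelector: map[string]string{archLabel: "arm64"},
		Tolerations:  []Toleration{{Key: "arch", Value: "arm64", Effect: "NoSchedule"}},
	}
	plainPod := Pod{Name: "plain-pod"}
	for _, p := range []Pod{armPod, plainPod} {
		for _, n := range []Node{armNode, x86Node} {
			fmt.Printf("%s on %s: %v\n", p.Name, n.Name, canSchedule(p, n))
		}
	}
}
```

The model shows why the two mechanisms complement each other: the taint keeps pods without a toleration off the Arm node, while the node selector keeps the Arm pod off the x86 node.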

Let’s see how you can implement each of these steps in an example project. You can follow this entire use case with an Arm Learning Path.

Create a multi-arch Amazon EKS cluster directly through the AWS console, or use the following eksctl YAML file:

    apiVersion: eksctl.io/v1alpha5
    kind: ClusterConfig
    metadata:
      name: multi-arch-cluster
      region: us-east-1
    nodeGroups:
      - name: x86-node-group
        instanceType: m5.large
        desiredCapacity: 2
        volumeSize: 80
      - name: arm64-node-group
        instanceType: m6g.large
        desiredCapacity: 2
        volumeSize: 80

When you run the following command, you should see both architectures printed on the console:

    kubectl get node -o jsonpath='{.items[*].status.nodeInfo.architecture}'
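If you want to check this in a script rather than by eye, the output can be tallied. The sketch below assumes the command prints whitespace-separated architecture names, one per node; the sample string is hypothetical output for a cluster with two nodes of each architecture, and both helper names are illustrative.

```go
package main

import (
	"fmt"
	"strings"
)

// countArchitectures tallies whitespace-separated architecture names,
// such as those printed by the kubectl command above.
func countArchitectures(output string) map[string]int {
	counts := make(map[string]int)
	for _, arch := range strings.Fields(output) {
		counts[arch]++
	}
	return counts
}

// isMultiArch reports whether both amd64 and arm64 nodes are present.
func isMultiArch(counts map[string]int) bool {
	return counts["amd64"] > 0 && counts["arm64"] > 0
}

func main() {
	// Hypothetical output for two x86 nodes and two Arm nodes.
	sample := "amd64 amd64 arm64 arm64"
	counts := countArchitectures(sample)
	fmt.Println(counts, isMultiArch(counts))
}
```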

To steer pods to the right nodes, add the following nodeSelector block to the x86/amd64 or ARM64 version of the Kubernetes deployment file. This ensures that the pods are scheduled on the correct architecture.

    nodeSelector:
      kubernetes.io/arch: arm64

For more details, follow the Arm Learning Path mentioned above.

Create a multi-architecture Dockerfile. For the example application, you can use the source code files located in the GitHub repository. It’s a Go-based web application that prints the architecture of the Kubernetes node it’s running on. In this repo, let’s look at the Dockerfile below:

    # T is prepended to the release-stage base image name; empty by default
    ARG T

    #
    # Build: 1st stage
    #
    FROM golang:1.21-alpine as builder
    # TARCH selects the Go target architecture (for example amd64 or arm64)
    ARG TARCH
    WORKDIR /app
    COPY go.mod .
    COPY hello.go .
    RUN GOARCH=${TARCH} go build -o /hello && \
        apk add --update --no-cache file && \
        file /hello

    #
    # Release: 2nd stage
    #
    FROM ${T}alpine
    WORKDIR /
    COPY --from=builder /hello /hello
    RUN apk add --update --no-cache file
    CMD [ "/hello" ]

Note the RUN statement in the “Build: 1st stage” section of the Dockerfile:

    RUN GOARCH=${TARCH}

Setting GOARCH from the TARCH build argument tells the Go toolchain which architecture to compile for, so the binary in each image matches the architecture that image targets. That’s it! That’s all it takes to convert this application to run on multiple architectures. While some complex applications may require additional work, this example shows how easy it is to get started with multi-architecture containers.
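For orientation, here is a rough sketch of what a Go source file like the one the Dockerfile copies might contain. It is not the code from the repository: the message format, the `message` helper, and the `LISTEN_ADDR` variable are assumptions made for illustration. The `NODE_NAME` and `POD_NAME` environment variables are the ones set by the deployment manifest later in this article.

```go
package main

import (
	"fmt"
	"log"
	"net/http"
	"net/http/httptest"
	"os"
	"runtime"
)

// message builds the response text (the wording is an assumption).
func message(arch, node, pod string) string {
	return fmt.Sprintf("Hello from pod %s on node %s (%s)", pod, node, arch)
}

// handler reports the architecture the binary was compiled for, which
// matches the node's architecture when the right image variant is pulled.
func handler(w http.ResponseWriter, r *http.Request) {
	fmt.Fprintln(w, message(runtime.GOARCH, os.Getenv("NODE_NAME"), os.Getenv("POD_NAME")))
}

func main() {
	// LISTEN_ADDR is a hypothetical variable, for example ":8080".
	if addr := os.Getenv("LISTEN_ADDR"); addr != "" {
		http.HandleFunc("/", handler)
		log.Fatal(http.ListenAndServe(addr, nil))
	}
	// Without LISTEN_ADDR, exercise the handler once in-process and exit,
	// so the sketch can be run without opening a port.
	rec := httptest.NewRecorder()
	handler(rec, httptest.NewRequest(http.MethodGet, "/", nil))
	fmt.Print(rec.Body.String())
}
```

Nothing in the source is architecture-specific: `runtime.GOARCH` is resolved at compile time, which is why the GOARCH setting in the build stage is the only change needed.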

You can use docker buildx to build a multi-architecture image and push it to the registry of your choice.

    docker buildx create --name multiarch --use --bootstrap
    docker buildx build -t <your-docker-repo-path>/multi-arch:latest --platform linux/amd64,linux/arm64 --push .

Deploy the multi-architecture Docker image in the EKS (Kubernetes) cluster.

Let’s use the following Kubernetes YAML file to deploy this image in our EKS cluster.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: multi-arch-deployment
      labels:
        app: hello
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: hello
          tier: web
      template:
        metadata:
          labels:
            app: hello
            tier: web
        spec:
          containers:
            - name: hello
              image: <your-docker-repo-path>/multi-arch:latest
              imagePullPolicy: Always
              ports:
                - containerPort: 8080
              env:
                - name: NODE_NAME
                  valueFrom:
                    fieldRef:
                      fieldPath: spec.nodeName
                - name: POD_NAME
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.name
              resources:
                requests:
                  cpu: 300m

To access the multi-architecture version of the application, run the following loop to see a mix of arm64 and amd64 messages.

    for i in $(seq 1 10); do curl -w '\n' http://<external_ip>; done
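To see how requests spread across architectures instead of reading each response, the same loop can be written as a small client that tallies replies. This is a sketch under assumptions: it guesses that each response body mentions `arm64` or `amd64`, and the helper names `classify`, `tally`, and `httpFetcher` are hypothetical.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"os"
	"strings"
	"time"
)

// classify labels a response body by the architecture name it mentions.
// The response format is an assumption; adjust the match to your application.
func classify(body string) string {
	switch {
	case strings.Contains(body, "arm64"):
		return "arm64"
	case strings.Contains(body, "amd64"):
		return "amd64"
	default:
		return "unknown"
	}
}

// tally calls fetch n times and counts responses per architecture.
// Failed requests are counted under "error".
func tally(fetch func() (string, error), n int) map[string]int {
	counts := make(map[string]int)
	for i := 0; i < n; i++ {
		body, err := fetch()
		if err != nil {
			counts["error"]++
			continue
		}
		counts[classify(body)]++
	}
	return counts
}

// httpFetcher returns a fetch function that issues a GET request to url.
func httpFetcher(url string) func() (string, error) {
	client := &http.Client{Timeout: 5 * time.Second}
	return func() (string, error) {
		resp, err := client.Get(url)
		if err != nil {
			return "", err
		}
		defer resp.Body.Close()
		data, err := io.ReadAll(resp.Body)
		return string(data), err
	}
}

func main() {
	if len(os.Args) < 2 {
		fmt.Println("usage: tally http://<external_ip>")
		return
	}
	fmt.Println(tally(httpFetcher(os.Args[1]), 10))
}
```

Passing the fetch step in as a function keeps the counting logic independent of the network, so it can be checked with a stub before pointing it at the load balancer.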

For software developers who want to learn more about technical best practices for building cloud-native applications on Arm, we provide several resources, such as Learning Paths that offer technical how-to information on a wide variety of topics. Also, check out our Arm Developer Hub to see how other developers are approaching multi-architecture development. There you get access to on-demand webinars, events, Discord channels, training, documentation, and more.