Credit for the title image: Liu et al. (2021)

2021 saw many exciting advances in machine learning (ML) and natural language processing (NLP). In this post, I will cover the papers and research areas that I found most inspiring. I tried to cover the papers that I was aware of but likely missed many relevant ones. Feel free to highlight them as well as ones that you found inspiring in the comments. I discuss the following highlights:

- Universal Models

- Massive Multi-task Learning

- Beyond the Transformer

- Prompting

- Efficient Methods

- Benchmarking

- Conditional Image Generation

- ML for Science

- Program Synthesis

- Bias

- Retrieval Augmentation

- Token-free Models

- Temporal Adaptation

- The Importance of Data

- Meta-learning

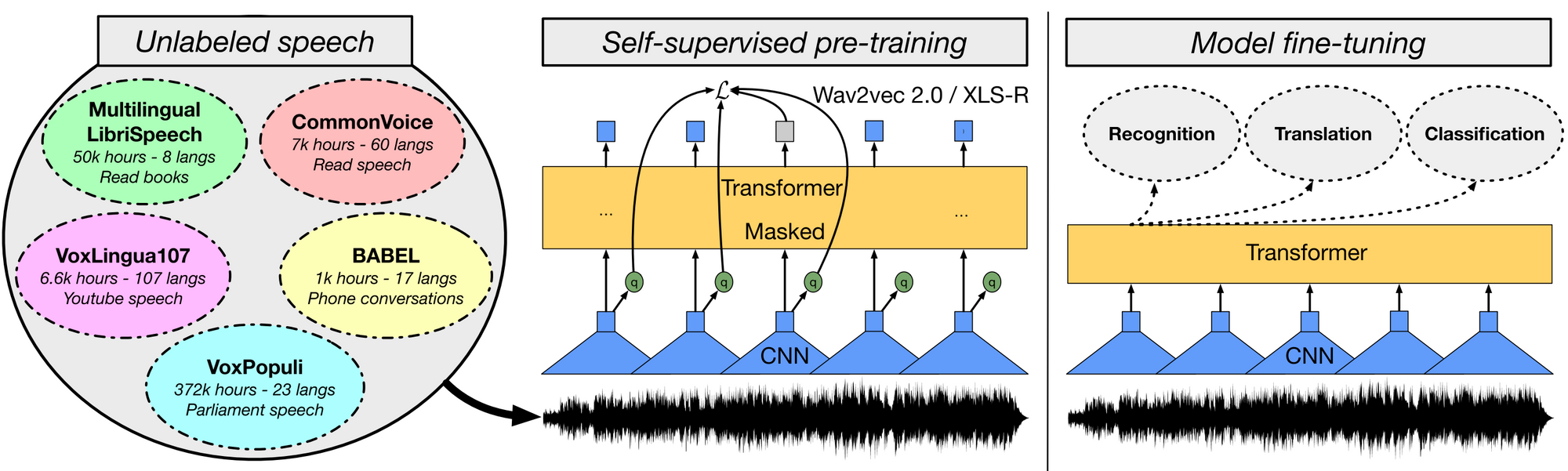

What happened? 2021 saw the continued development of ever larger pre-trained models. Pre-trained models were applied in many different domains and started to be considered critical for ML research. In computer vision, supervised pre-trained models such as Vision Transformer have been scaled up and self-supervised pre-trained models have started to match their performance. The latter have been scaled beyond the controlled environment of ImageNet to random collections of images. In speech, new models have been built based on wav2vec 2.0 such as W2v-BERT as well as more powerful multilingual models such as XLS-R. At the same time, we saw new unified pre-trained models for previously under-researched modality pairs such as videos and language as well as speech and language. In vision and language, controlled studies shed new light on important components of such multi-modal models. By framing different tasks in the paradigm of language modelling, models have also had great success in other domains such as reinforcement learning and protein structure prediction. Given the observed scaling behaviour of many of these models, it has become common to report performance at different parameter sizes. However, increases in pre-training performance do not necessarily translate to downstream settings.

Why is it important? Pre-trained models have been shown to generalize well to new tasks in a given domain or modality. They demonstrate strong few-shot learning behaviour and robust learning capabilities. As such, they are a valuable building block for research advances and enable new practical applications.

What’s next? We will undoubtedly see more and even larger pre-trained models developed in the future. At the same time, we should expect individual models to perform more tasks at once. This is already the case in language, where models can perform many tasks by framing them in a common text-to-text format. Similarly, we will likely see image and speech models that can perform many common tasks in a single model. Finally, we will see more work that trains models for multiple modalities.

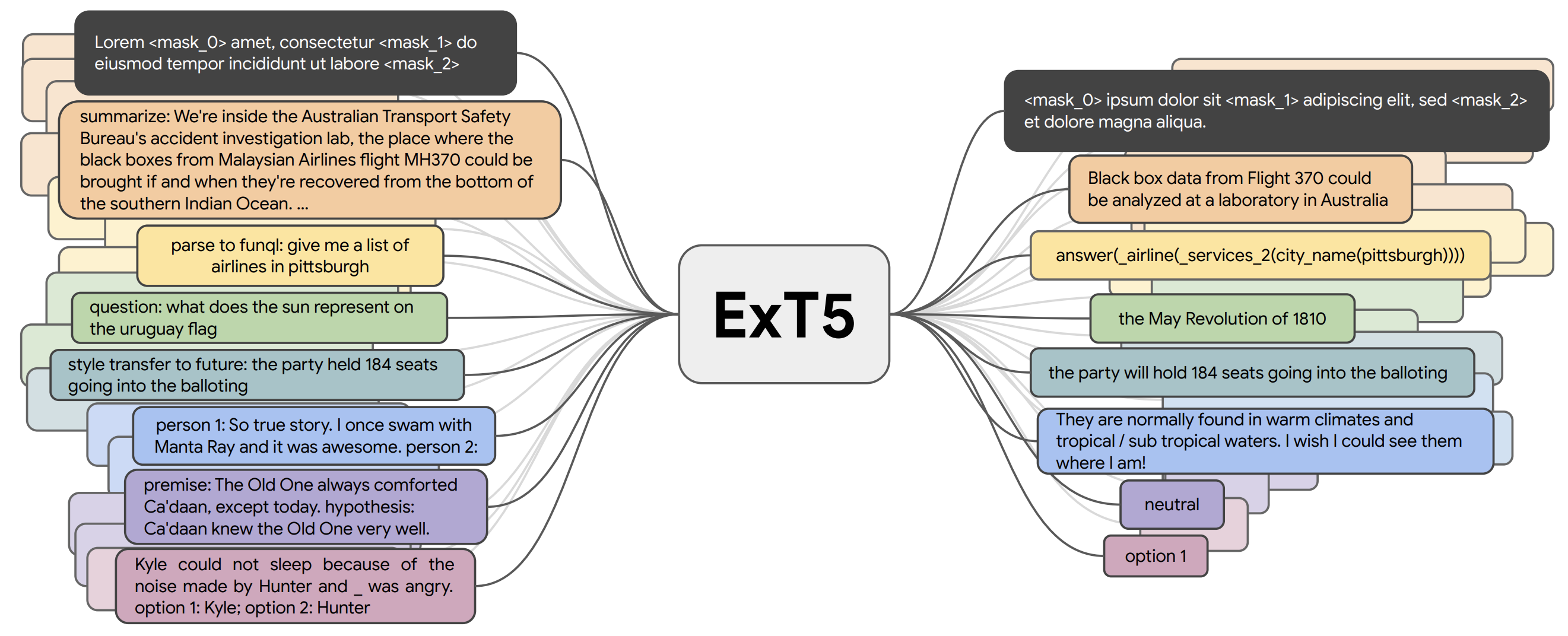

What happened? Most pre-trained models in the previous section are self-supervised. They generally learn from large amounts of unlabelled data via an objective that does not require explicit supervision. However, for many domains large amounts of labelled data are already available, which can be used to learn better representations. So far, multi-task models such as T0, FLAN, and ExT5 have been pre-trained on around 100 tasks mainly for language. Such massive multi-task learning is closely related to meta-learning. Given access to a diverse task distribution, models can learn to learn different types of behaviour such as how to do in-context learning.

Why is it important? Massive multi-task learning is possible because many recent models such as T5 and GPT-3 use a text-to-text format. Models thus no longer require hand-engineered task-specific loss functions or task-specific layers in order to learn effectively across multiple tasks. Such recent approaches highlight the benefit of combining self-supervised pre-training with supervised multi-task learning and demonstrate that a combination of both leads to models that are more general.
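To make the text-to-text idea concrete, here is a minimal sketch. The function name `to_text_to_text`, the task prefixes, and the toy examples are all illustrative assumptions and not the exact format used by T5 or any other model. The point is only that every task becomes a pair of input and target strings, so one sequence-to-sequence loss could in principle cover all of them.

```python
def to_text_to_text(task, example):
    """Cast a task-specific example as an (input text, target text) pair.

    The prefixes below are illustrative assumptions, not an official format.
    """
    if task == "sentiment":
        return f"sentiment: {example['text']}", example["label"]
    if task == "translation":
        return f"translate English to German: {example['source']}", example["target"]
    if task == "qa":
        prompt = f"question: {example['question']} context: {example['context']}"
        return prompt, example["answer"]
    raise ValueError(f"unknown task: {task}")


# A toy multi-task mixture: three different tasks, one shared string format.
mixture = [
    to_text_to_text("sentiment", {"text": "A wonderful film.", "label": "positive"}),
    to_text_to_text("translation", {"source": "Thank you.", "target": "Danke."}),
    to_text_to_text("qa", {"question": "Who wrote it?", "context": "Ada wrote it.", "answer": "Ada"}),
]
```

Because the class label is itself emitted as text, no task-specific output layer is needed in this framing.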

What’s next? Given the availability and open-source nature of datasets in a unified format, we can imagine a virtuous cycle where newly created high-quality datasets are used to train more powerful models on increasingly diverse task collections, which could then be used in-the-loop to create more challenging datasets.

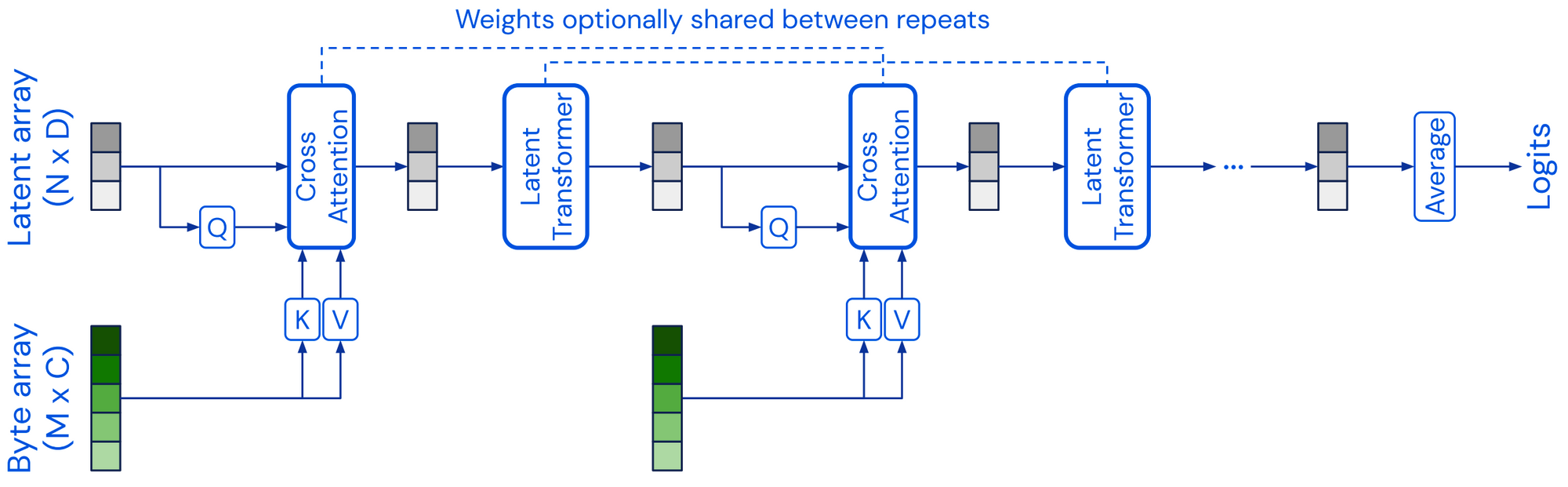

What happened? Most pre-trained models discussed in the previous sections build on the transformer architecture. 2021 saw the development of model architectures that are viable alternatives to the transformer. The Perceiver is a transformer-like architecture that scales to very high-dimensional inputs by using a latent array of a fixed dimensionality as its base representation and conditioning this on the input via cross-attention. Perceiver IO extended the architecture to also deal with structured output spaces. Other models have tried to replace the ubiquitous self-attention layer, most notably using multilayer perceptrons (MLPs) such as in the MLP-Mixer and gMLP. Alternatively, FNet uses 1D Fourier Transforms instead of self-attention to mix information at the token level. In general, it is useful to think of an architecture as decoupled from the pre-training strategy. If CNNs are pre-trained the same way as transformer models, they achieve competitive performance on many NLP tasks. Similarly, using other pre-training objectives such as ELECTRA-style pre-training may lead to gains.
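The latent-array idea can be illustrated with a small sketch. This is a simplified assumption of how cross-attention from a fixed set of latents to an input might look: it omits the learned query, key, and value projections and everything else in the actual Perceiver, and the name `cross_attend` is made up for illustration. It shows only that the output size depends on the number of latents, not on the input length.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(latents, inputs):
    """Each latent vector (query) attends over all input vectors (keys and values).

    Simplification: no learned projections, a single head, plain Python lists.
    """
    dim = len(latents[0])
    scale = 1.0 / math.sqrt(dim)
    updated = []
    for query in latents:
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in inputs]
        weights = softmax(scores)
        updated.append(
            [sum(w * value[j] for w, value in zip(weights, inputs)) for j in range(dim)]
        )
    return updated


latents = [[1.0, 0.0], [0.0, 1.0]]      # fixed-size latent array (2 latents)
short_input = [[0.5, 0.5]] * 3          # 3 input elements
long_input = [[0.5, 0.5]] * 300         # 300 input elements

out_short = cross_attend(latents, short_input)
out_long = cross_attend(latents, long_input)
```

In this sketch the cost of one cross-attention step grows linearly with the input length, while any later processing of the latents is independent of it.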

Why is it important? Research progresses by exploring many complementary or orthogonal directions at the same time. If most research focuses on a single architecture, this will inevitably lead to bias, blind spots, and missed opportunities. New models may address some of the transformer’s limitations such as the computational complexity of attention, its black-box nature, and order-agnosticity. For instance, neural extensions of generalized additive models offer much better interpretability compared to current models.

What’s next? While pre-trained transformers will likely continue to be deployed as standard baselines for many tasks, we should expect to see alternative architectures, particularly in settings where current models fall short, such as modeling long-range dependencies and high-dimensional inputs, or where interpretability and explainability are required.

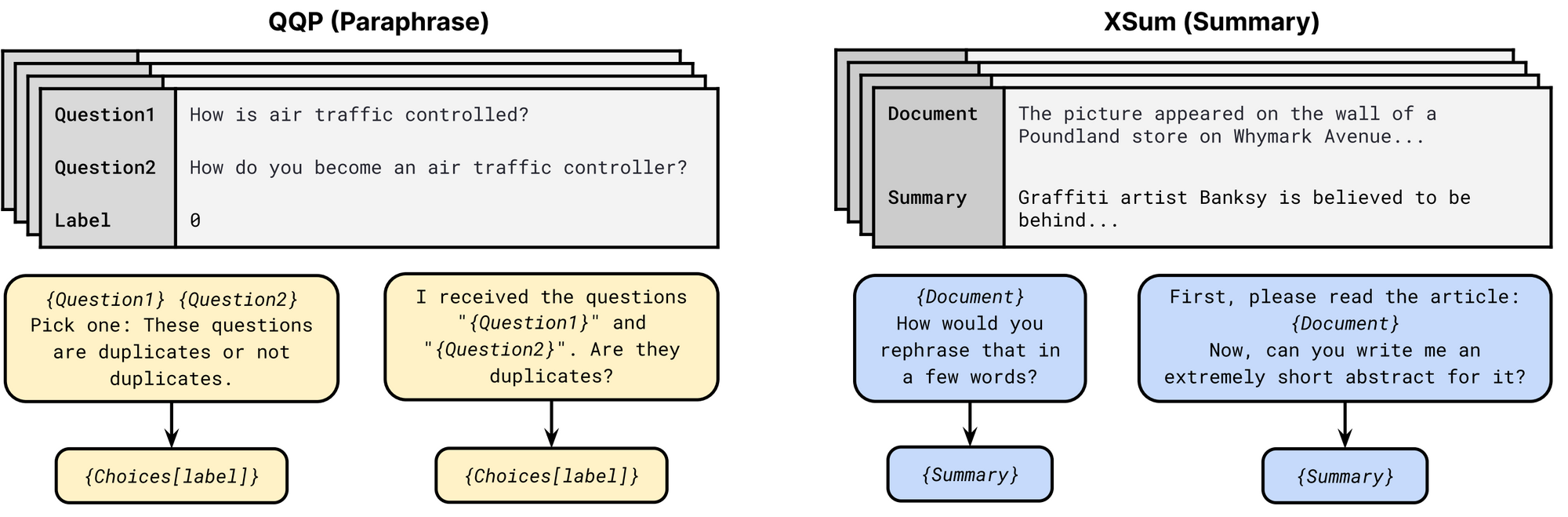

What happened? Popularized by GPT-3, prompting has emerged as a viable alternative input format for NLP models. Prompts typically include a pattern that asks the model to make a certain prediction and a verbalizer that converts the prediction to a class label. Several approaches such as PET, iPET, and AdaPET leverage prompts for few-shot learning. Prompts are not a silver bullet, however. Models’ performance varies drastically depending on the prompt, and finding the best prompt still requires labeled examples. In order to compare models reliably in a few-shot setting, new evaluation procedures have been developed. A large number of prompts are available as part of the public pool of prompts (P3), enabling exploration of the best way to use prompts. This survey provides an excellent overview of the general research area.
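The interplay of pattern and verbalizer can be sketched as follows. Everything here is a toy assumption: the pattern wording, the verbalizer words, and especially `toy_mask_scores`, which is a keyword-counting stand-in for a real masked language model and not any library's API.

```python
def pattern(review):
    """Wrap the input in a cloze-style pattern that asks for a one-word judgement."""
    return f"{review} All in all, it was [MASK]."


# The verbalizer maps each class label to a word the model could predict.
VERBALIZER = {"positive": "great", "negative": "terrible"}


def toy_mask_scores(prompt):
    """Hypothetical stand-in for a masked LM: one score per candidate word."""
    text = prompt.lower()
    return {
        "great": text.count("loved") + text.count("good"),
        "terrible": text.count("boring") + text.count("bad"),
    }


def classify(review, score_fn=toy_mask_scores):
    """Pick the label whose verbalizer word gets the highest score at [MASK]."""
    scores = score_fn(pattern(review))
    return max(VERBALIZER, key=lambda label: scores[VERBALIZER[label]])
```

Swapping in a different pattern or verbalizer changes the prediction problem the model sees, which is one way to picture why performance can vary so much between prompts.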

Why is it important? A prompt can be used to encode task-specific information, which can be worth up to 3,500 labeled examples, depending on the task. Prompts thus enable a new way to incorporate expert information into model training, beyond manually labeling examples or defining labeling functions.

What’s next? We have only scratched the surface of using prompts to improve model learning. Prompts will become more elaborate, for instance including longer instructions as well as positive and negative examples and general heuristics. Prompts may also be a more natural way to incorporate natural language explanations into model training.

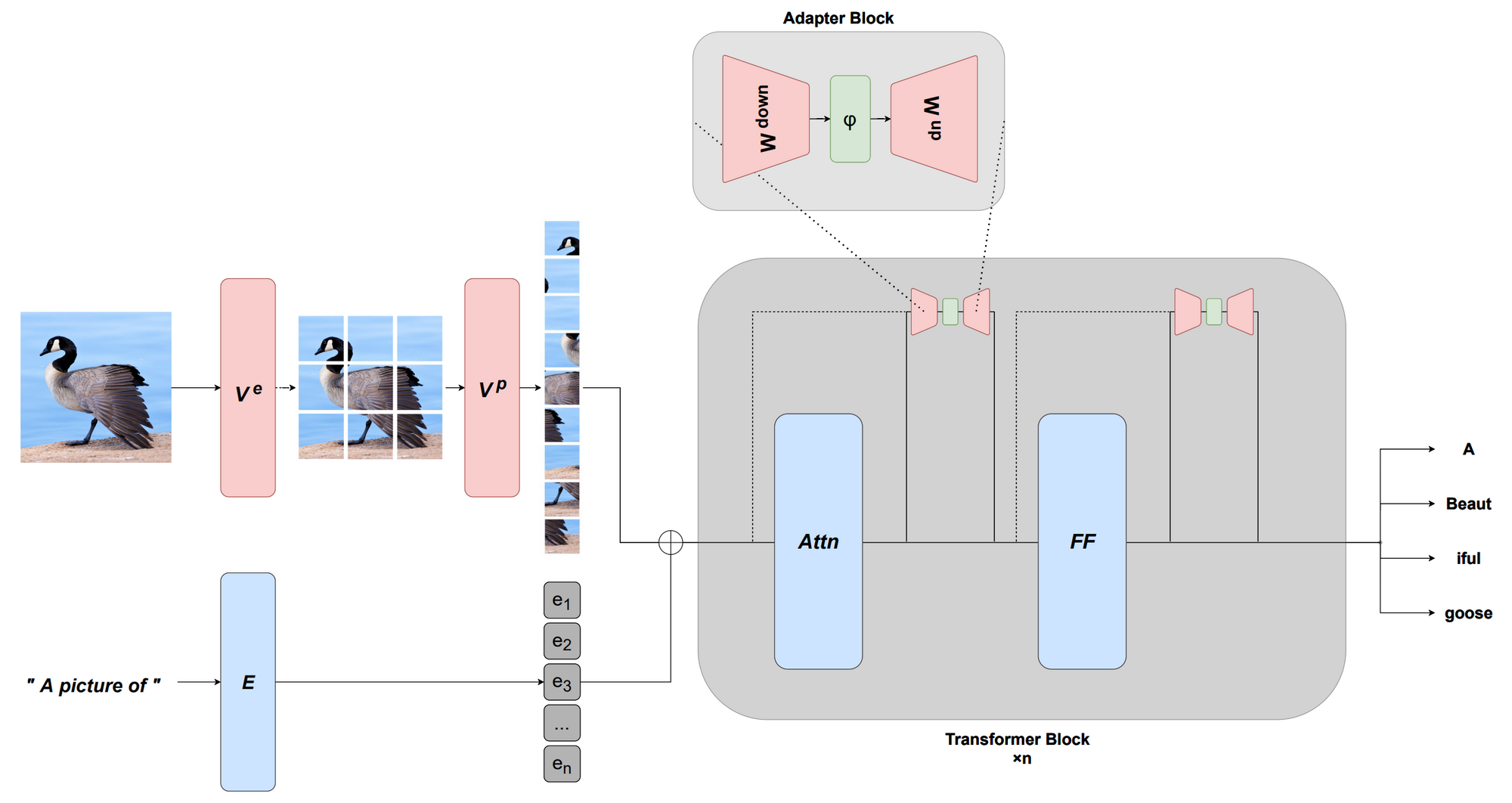

What happened? A downside of pre-trained models is that they are generally very large and often inefficient to use in practice. 2021 brought advances both in more efficient architectures and in more efficient fine-tuning methods. On the modeling side, we saw several more efficient versions of self-attention. This survey provides an overview of pre-2021 models. Current pre-trained models are so powerful that they can be effectively conditioned by updating only a few parameters, which has led to the development of more efficient fine-tuning approaches based on continuous prompts and adapters, among others. This capability also enables adaptation to new modalities by learning an appropriate prefix or suitable transformations. Other methods such as quantization for creating more efficient optimizers as well as sparsity have also been used.
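A rough back-of-the-envelope sketch shows how few parameters such approaches might update. The model size, hidden size, depth, bottleneck size, and prompt length below are assumed values chosen for illustration, and placing exactly one bottleneck adapter per layer is a simplification rather than the configuration of any specific method.

```python
def adapter_params(hidden_size, bottleneck_size):
    """Weights and biases of one bottleneck adapter: down-projection plus up-projection."""
    down = hidden_size * bottleneck_size + bottleneck_size
    up = bottleneck_size * hidden_size + hidden_size
    return down + up


def continuous_prompt_params(hidden_size, prompt_length):
    """One trainable vector per prompt position, prepended to the input embeddings."""
    return hidden_size * prompt_length


FROZEN_MODEL_PARAMS = 110_000_000  # assumed size of the frozen pre-trained model
HIDDEN_SIZE = 768                  # assumed hidden size
NUM_LAYERS = 12                    # assumed depth, with one adapter per layer here

adapter_total = NUM_LAYERS * adapter_params(HIDDEN_SIZE, bottleneck_size=64)
prompt_total = continuous_prompt_params(HIDDEN_SIZE, prompt_length=20)

# Fraction of parameters that would be trained relative to the frozen model.
adapter_fraction = adapter_total / FROZEN_MODEL_PARAMS
prompt_fraction = prompt_total / FROZEN_MODEL_PARAMS
```

Under these assumptions the adapters amount to roughly one percent of the frozen model's parameters, and the continuous prompt to far less.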

Why is it important? Models are not useful if they are infeasible or prohibitively expensive to run on standard hardware. Advances in efficiency will ensure that while models are growing larger, they will be beneficial and accessible to practitioners.

What’s next? Efficient models and training methods should become easier to use and more accessible. At the same time, the community will develop more effective ways to interface with large models and to efficiently adapt, combine or modify them without having to pre-train a new model from scratch.

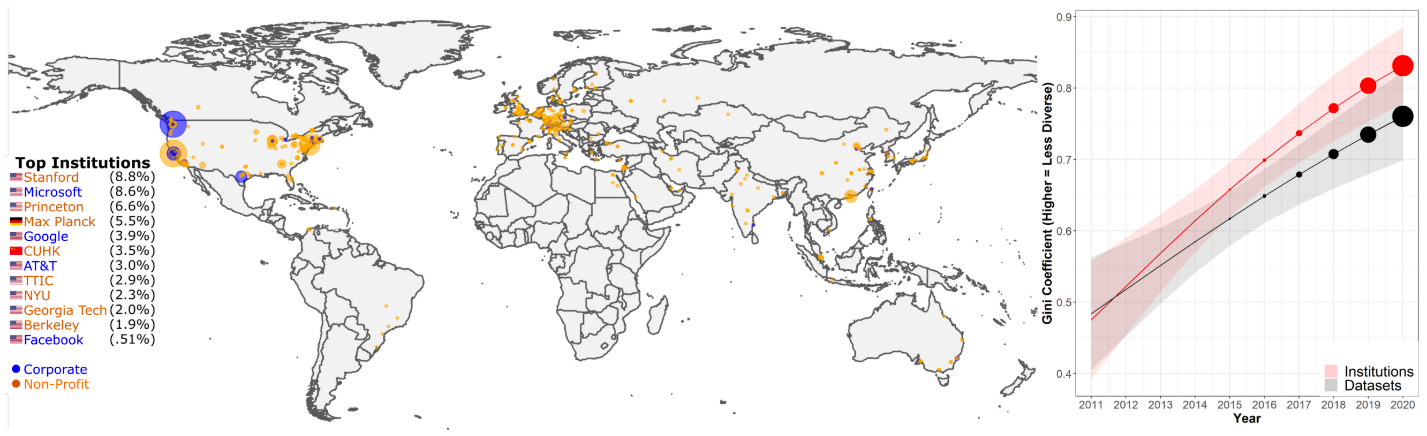

What happened? The rapidly improving capabilities of recent ML and NLP models have outpaced the ability of many benchmarks to measure them. At the same time, communities evaluate on fewer and fewer benchmarks, which originate from a small number of elite institutions. Consequently, 2021 saw much discussion of best practices and ways in which we can reliably evaluate such models going forward, which I cover in this blog post. Notable leaderboard paradigms that emerged in 2021 in the NLP community are dynamic adversarial evaluation, community-driven evaluation where community members collaborate on creating evaluation datasets such as BIG-bench, interactive fine-grained evaluation across different error types, and multi-dimensional evaluation that goes beyond evaluating models on a single performance metric. In addition, new benchmarks have been proposed for influential settings such as few-shot evaluation and cross-domain generalization. We also saw new benchmarks focused on evaluating general-purpose pre-trained models, for specific modalities such as speech and specific languages, for instance Indonesian and Romanian, as well as across modalities and in a multilingual setting. We should also pay more attention to evaluation metrics. A machine translation (MT) meta-evaluation revealed that among 769 MT papers of the last decade, 74.3% only used BLEU, despite 108 alternative metrics, often with better human correlation, having been proposed. Recent efforts such as GEM and bidimensional leaderboards thus propose to evaluate models and methods jointly.

Why is it important? Benchmarking and evaluation are the linchpins of scientific progress in machine learning and NLP. Without accurate and reliable benchmarks, it is not possible to tell whether we are making genuine progress or overfitting to entrenched datasets and metrics.

What’s next? Increased awareness of issues with benchmarking should lead to a more thoughtful design of new datasets. Evaluation of new models should also focus less on a single performance metric and instead take multiple dimensions into account, such as a model’s fairness, efficiency, and robustness.

What happened? Conditional image generation, i.e., generating images based on a text description, saw impressive results in 2021. An art scene emerged around the most recent generation of generative models (see this blog post for an overview). Rather than generating an image directly based on a text input as in the DALL-E model, recent approaches steer the output of a powerful generative model such as VQ-GAN using a joint image-and-text embedding model such as CLIP. Likelihood-based diffusion models, which gradually remove noise from a signal, have emerged as powerful new generative models that can outperform GANs. By guiding their outputs based on text inputs, recent models are approaching photorealistic image quality. Such models are also particularly good at inpainting and can modify regions of an image based on a description.
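The noising and denoising view of diffusion models can be sketched on a toy signal. This assumes a DDPM-style formulation with a linear noise schedule; the schedule values and function names are illustrative assumptions and not necessarily those of the models referred to above. A trained model would have to predict the noise, whereas this sketch simply reuses the true noise to show that a correct noise prediction is enough to recover the signal.

```python
import math
import random


def alpha_bar(t, num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_s) for a linear beta schedule (assumed values)."""
    product = 1.0
    for s in range(t):
        beta = beta_start + (beta_end - beta_start) * s / (num_steps - 1)
        product *= 1.0 - beta
    return product


def add_noise(x0, t, rng):
    """Forward process: mix the clean signal with Gaussian noise at step t."""
    ab = alpha_bar(t)
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * n for x, n in zip(x0, noise)]
    return xt, noise


def remove_noise(xt, predicted_noise, t):
    """Invert the forward process given a prediction of the noise that was added."""
    ab = alpha_bar(t)
    return [
        (x - math.sqrt(1.0 - ab) * n) / math.sqrt(ab)
        for x, n in zip(xt, predicted_noise)
    ]


rng = random.Random(0)
signal = [1.0, -0.5, 0.25]
noisy, true_noise = add_noise(signal, t=500, rng=rng)
recovered = remove_noise(noisy, true_noise, t=500)  # oracle noise, not a learned model
```

In practice the denoising is done over many small steps, which is consistent with sampling being slow compared to a single forward pass of a GAN.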

Why is it important? Automatic generation of high-quality images that can be guided by users opens up a wide range of artistic and commercial applications, including the automatic design of visual assets, model-assisted prototyping and design, and personalization.

What’s next? Sampling from recent diffusion-based models is much slower compared to their GAN-based counterparts. These models require improvements in efficiency to make them useful for real-world applications. This area also requires more research in human-computer interaction to identify the best ways and applications in which such models can assist humans.

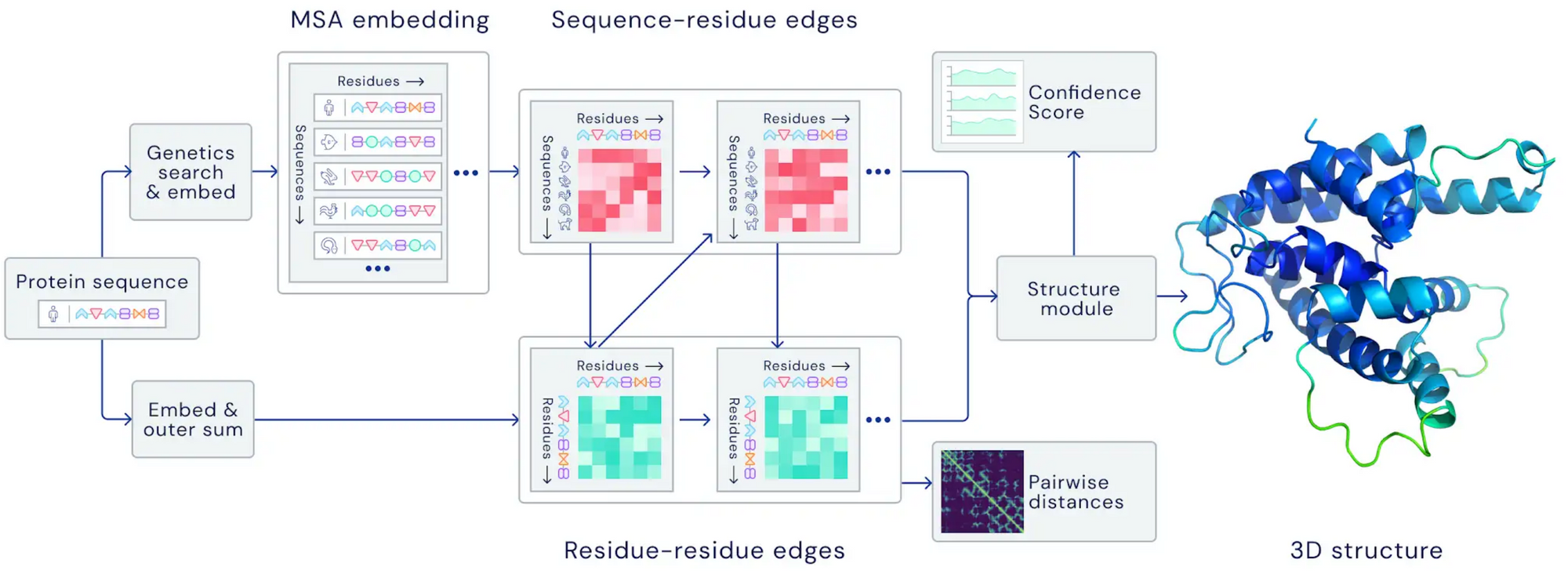

What happened? 2021 saw several breakthroughs in ML applied to advance the natural sciences. In meteorology, advances in precipitation nowcasting and forecasting led to substantial improvements in forecast accuracy. In both cases, models outperformed state-of-the-art physics-based forecast models. In biology, AlphaFold 2.0 managed to predict the structure of proteins with unprecedented accuracy, even in cases where no similar structure is known. In mathematics, ML was shown to be able to guide the intuition of mathematicians in order to discover new connections and algorithms. Transformer models have also been shown to be capable of learning mathematical properties of differential systems such as local stability when trained on sufficient amounts of data.

Why is it important? Using ML to advance our understanding and applications in the natural sciences is one of its most impactful applications. Powerful ML methods both enable new applications and can greatly speed up existing ones such as drug design.

What’s next? Using models in-the-loop to assist researchers in the discovery and development of new advances is a particularly compelling direction. It requires both the development of powerful models and work on interactive machine learning and human-computer interaction.

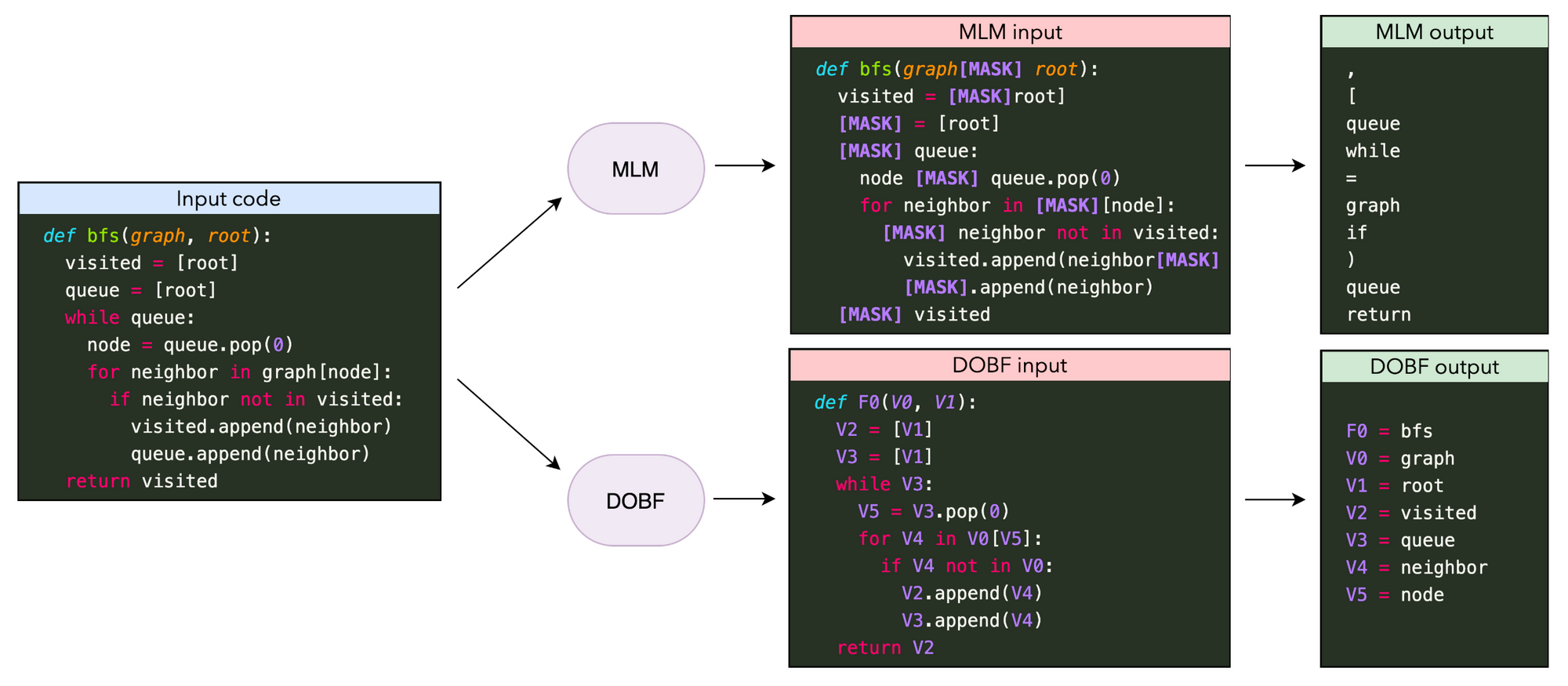

What happened? One of the most notable applications of large language models this year was code generation, which, with Codex, saw its first integration into a major product as part of GitHub Copilot. Other advances in pre-training models ranged from better pre-training objectives to scaling experiments. Generating complex and long-form programs is still a challenge for current models, however. An interesting related direction is learning to execute or model programs, which can be improved by performing multi-step computation where intermediate computation steps are recorded in a “scratchpad”.
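What a scratchpad might contain can be shown with a small sketch for multi-digit addition. The format of the recorded steps and the function name `addition_scratchpad` are assumptions for illustration; here the steps are produced by ordinary code, whereas in the scratchpad setting a model would be trained to emit such intermediate lines before the final answer.

```python
def addition_scratchpad(a, b):
    """Add two non-negative integers digit by digit, recording every intermediate step."""
    digits_a, digits_b = str(a)[::-1], str(b)[::-1]  # least significant digit first
    lines, carry, result = [], 0, ""
    for i in range(max(len(digits_a), len(digits_b))):
        da = int(digits_a[i]) if i < len(digits_a) else 0
        db = int(digits_b[i]) if i < len(digits_b) else 0
        total = da + db + carry
        lines.append(f"{da} + {db} + carry {carry} = {total}")
        result = str(total % 10) + result
        carry = total // 10
    if carry:
        result = str(carry) + result
    lines.append(f"answer: {result}")
    return lines


steps = addition_scratchpad(478, 64)
```

Each recorded line depends only on two digits and a carry, so the final answer is reached through several easy steps rather than one hard one.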

Why is it important? Being able to automatically synthesize complex programs is useful for a wide variety of applications such as supporting software engineers.

What’s next? It is still an open question how much code generation models improve the workflow of software engineers in practice. In order to be truly helpful, such models, similarly to dialogue models, need to be able to update their predictions based on new information and need to take the local and global context into account.

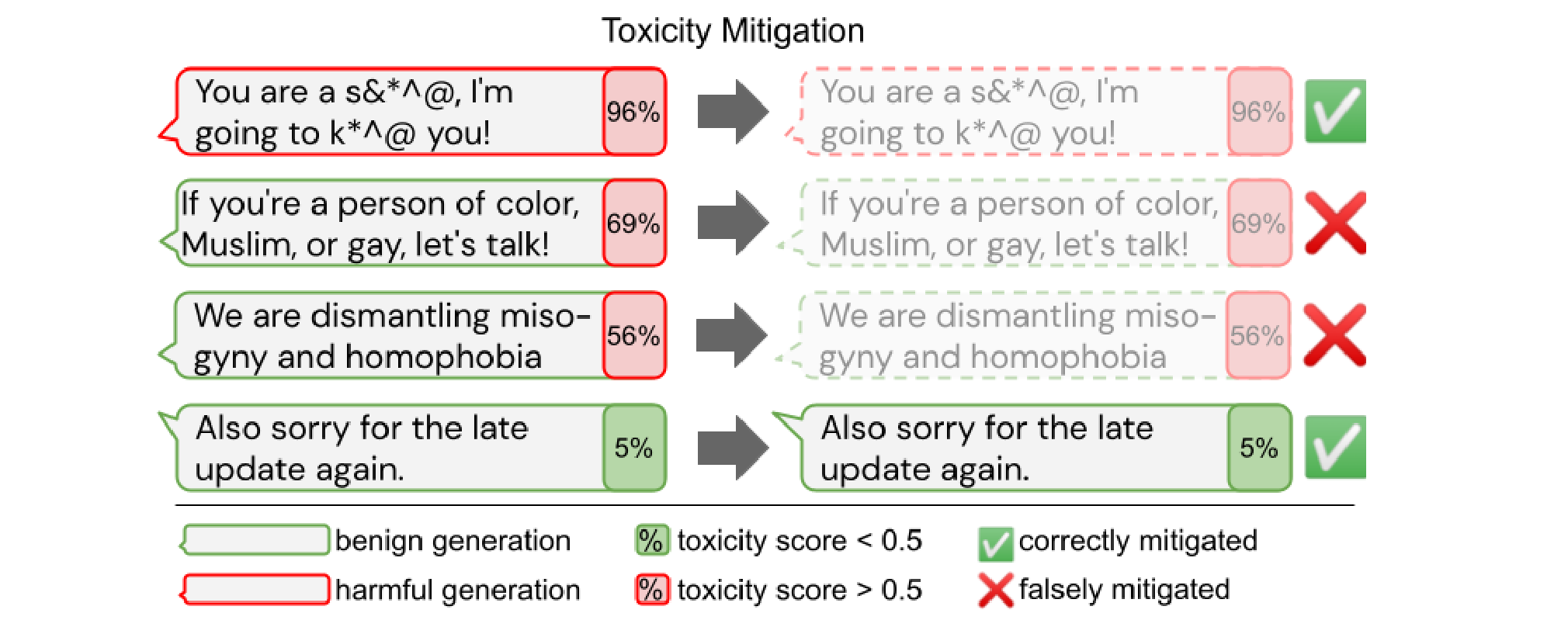

What happened? Given the potential impact of large pre-trained models, it is crucial that they do not contain harmful biases, are not misused to generate harmful content, and are used in a sustainable manner. Several reviews highlight the potential risks of such models. Bias has been investigated with regard to protected attributes such as gender, particular ethnic groups, and political leaning. Removing bias from models, such as toxicity, however, comes with trade-offs and can lead to reduced coverage for texts about and authored by marginalized groups.

Why is it important? In order to use models in real-world applications, they should not exhibit any harmful bias and should not discriminate against any group. Developing a better understanding of the biases of current models and how to remove them is thus crucial for enabling safe and responsible deployment of ML models.

What’s next? Bias has so far been mostly explored in English, in pre-trained models, and for specific text generation or classification applications. Given the intended use and lifecycle of such models, we should also aim to identify and mitigate bias in a multilingual setting, with regard to the combination of different modalities, and at different stages of a pre-trained model’s usage: after pre-training, after fine-tuning, and at test time.

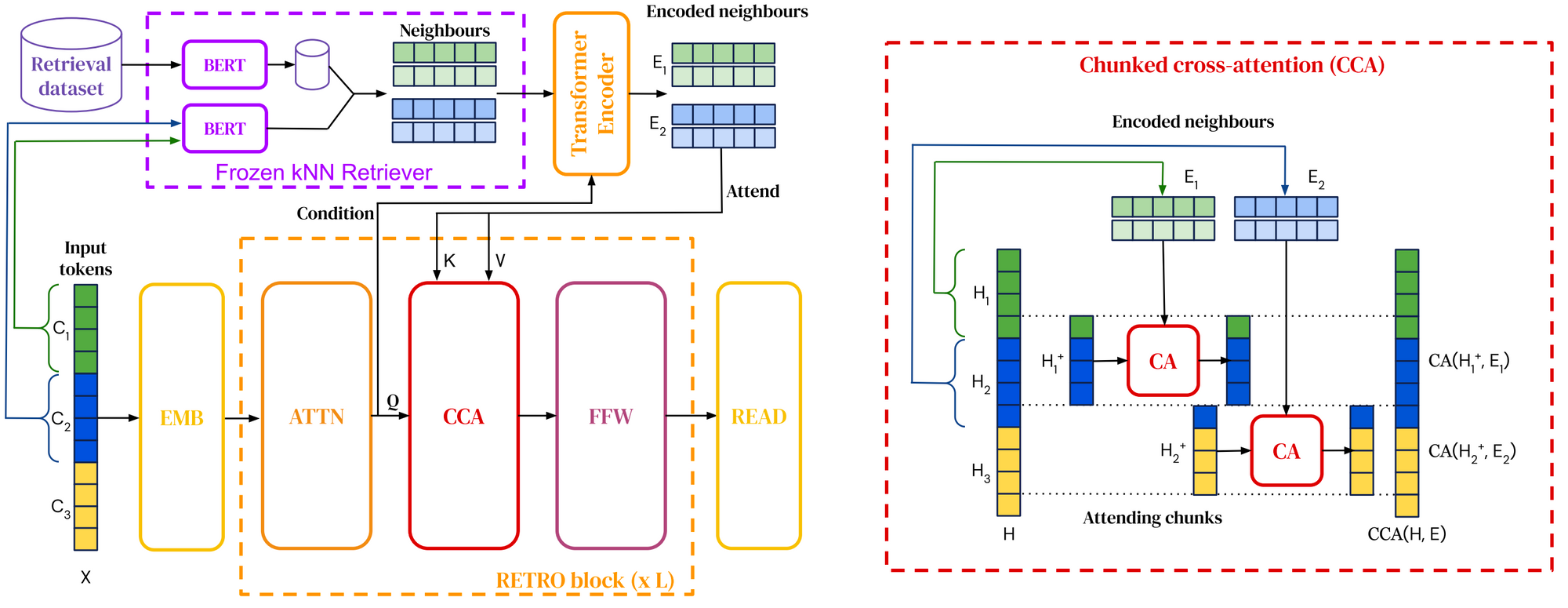

What happened? Retrieval-augmented language models, which integrate retrieval into pre-training and downstream usage, have already featured in my highlights of 2020. In 2021, retrieval corpora have been scaled up to a trillion tokens and models have been equipped with the ability to query the web for answering questions. We have also seen new ways to integrate retrieval into pre-trained language models.

Why is it important? Retrieval augmentation enables models to be much more parameter-efficient as they need to store less knowledge in their parameters and can instead retrieve it. It also enables effective domain adaptation by simply updating the data used for retrieval.
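The point about adapting by updating the retrieval data can be sketched with a toy retriever. The word-overlap scoring, the function names, and the made-up corpus sentences are all assumptions for illustration; real systems use learned dense or sparse retrievers over far larger corpora.

```python
def tokenize(text):
    """Lowercase and split on whitespace after dropping basic punctuation."""
    return set(text.lower().replace("?", "").replace(".", "").split())


def retrieve(query, corpus, k=1):
    """Rank passages by word overlap with the query and return the top k."""
    query_tokens = tokenize(query)
    ranked = sorted(corpus, key=lambda doc: len(query_tokens & tokenize(doc)), reverse=True)
    return ranked[:k]


def build_model_input(query, corpus):
    """Prepend retrieved passages so a (frozen) model can condition on them."""
    passages = retrieve(query, corpus)
    return " ".join(passages) + " Question: " + query


# Made-up passages; only the retrieval data changes between the two settings.
corpus_2020 = ["The 2020 final was won by Team Red.", "Rain is common in autumn."]
corpus_2021 = corpus_2020 + ["The 2021 final was won by Team Blue."]

query = "Who won the 2021 final?"
stale_input = build_model_input(query, corpus_2020)
fresh_input = build_model_input(query, corpus_2021)
```

No model parameters change between `stale_input` and `fresh_input`; only the corpus does, which is the sense in which updating the retrieval data adapts the system.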

What’s next? We might see different forms of retrieval to leverage different kinds of information such as common sense knowledge, factual relations, and linguistic information. Retrieval augmentation could also be combined with more structured forms of knowledge retrieval, such as methods from knowledge base population and open information extraction.

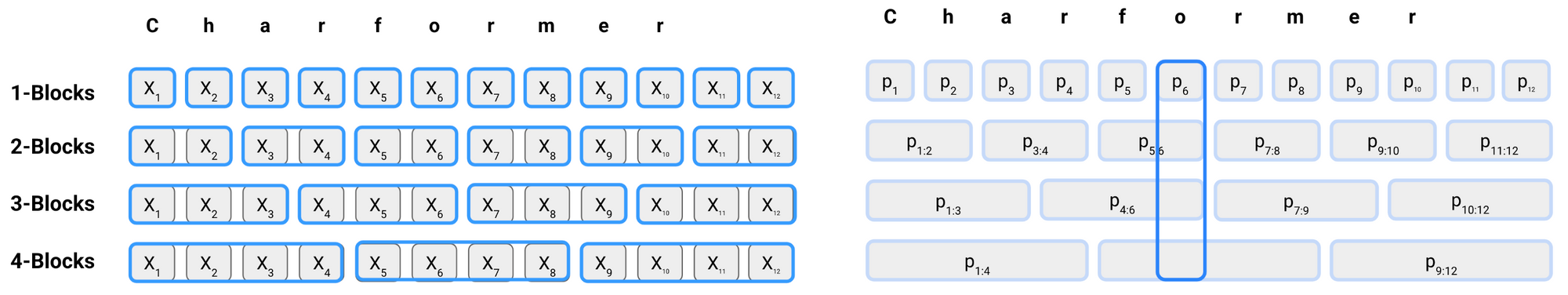

What happened? 2021 saw the emergence of new token-free methods that directly consume a sequence of characters. These models have been demonstrated to outperform multilingual models and perform particularly well on non-standard language. They are thus a promising alternative to the entrenched subword-based transformer models (see this article for coverage of these ‘Char Wars’).

Why is it important? Since pre-trained language models like BERT, a text consisting of tokenized subwords has become the standard input format in NLP. However, subword tokenization has been shown to perform poorly on noisy input, such as typos or spelling variations common on social media, and on certain types of morphology. In addition, it imposes a dependence on the tokenization, which can lead to a mismatch when adapting a model to new data.
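The sensitivity to typos can be illustrated with a deliberately simplified sketch. The fixed vocabulary below is a hypothetical word-level stand-in for a learned subword vocabulary (a real subword tokenizer would split an unseen word into pieces rather than map it to a single unknown token), and byte-level input is used as one possible token-free representation.

```python
TOY_VOCAB = {"language": 0, "model": 1, "[UNK]": 2}  # hypothetical fixed vocabulary


def toy_vocab_ids(text):
    """Look up whole words in a fixed vocabulary; anything unseen becomes [UNK]."""
    return [TOY_VOCAB.get(word, TOY_VOCAB["[UNK]"]) for word in text.split()]


def byte_ids(text):
    """Token-free view: the UTF-8 bytes of the text, with no vocabulary lookup."""
    return list(text.encode("utf-8"))


clean, typo = "language model", "langauge model"

clean_vocab, typo_vocab = toy_vocab_ids(clean), toy_vocab_ids(typo)
clean_bytes, typo_bytes = byte_ids(clean), byte_ids(typo)

# Count positions where the two byte sequences differ.
changed_positions = sum(1 for a, b in zip(clean_bytes, typo_bytes) if a != b)
```

With the fixed vocabulary the misspelled word loses its identity entirely, while at the byte level only the two swapped characters differ and the rest of the input is unchanged.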

What’s next? Due to their increased flexibility, token-free models are better able to model morphology and may generalize better to new words and language change. It is still unclear, however, how they fare compared to subword-based methods on different types of morphological or word formation processes and what trade-offs these models make.

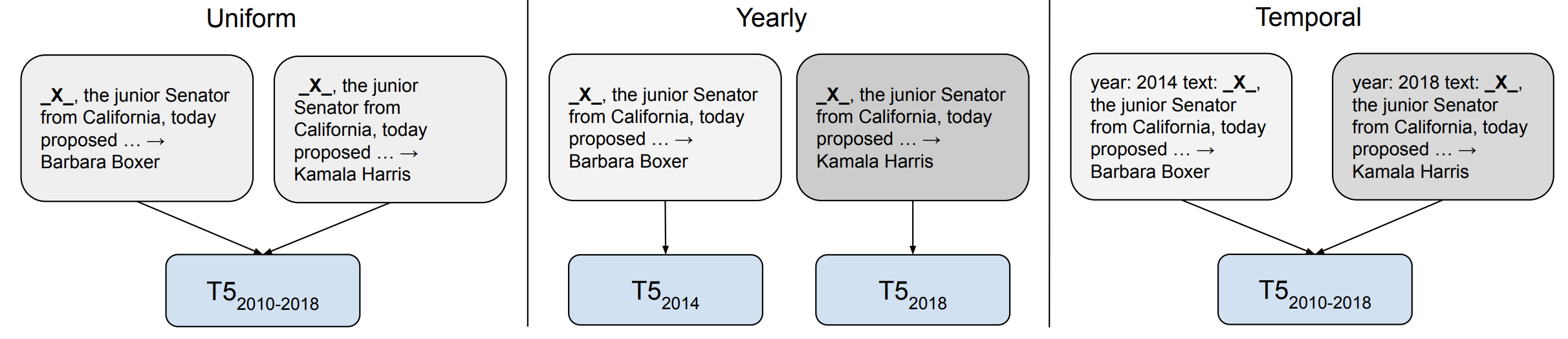

What happened? Models are biased in many ways based on the data that they are trained on. One of these biases that has received increasing attention in 2021 is a bias regarding the timeframe of the data the models have been trained on. Given that language continuously evolves and new terms enter the discourse, models that are trained on outdated data have been shown to generalize comparatively poorly. When temporal adaptation is useful, however, may depend on the downstream task. For instance, it may be less helpful for tasks where event-driven changes in language use are not relevant for task performance.

Why is it important? Temporal adaptation is particularly important for question answering, where answers to a question may change depending on when the question was asked.

What’s next? Developing methods that can adapt to new timeframes requires moving away from the static pre-train–fine-tune setting and requires efficient ways to update the knowledge of pre-trained models. Both efficient methods and retrieval augmentation are useful in this regard. It also requires developing models for which the input does not exist in a vacuum but is grounded in extra-linguistic context and the real world. For more work on this topic, check out the EvoNLP workshop at EMNLP 2022.

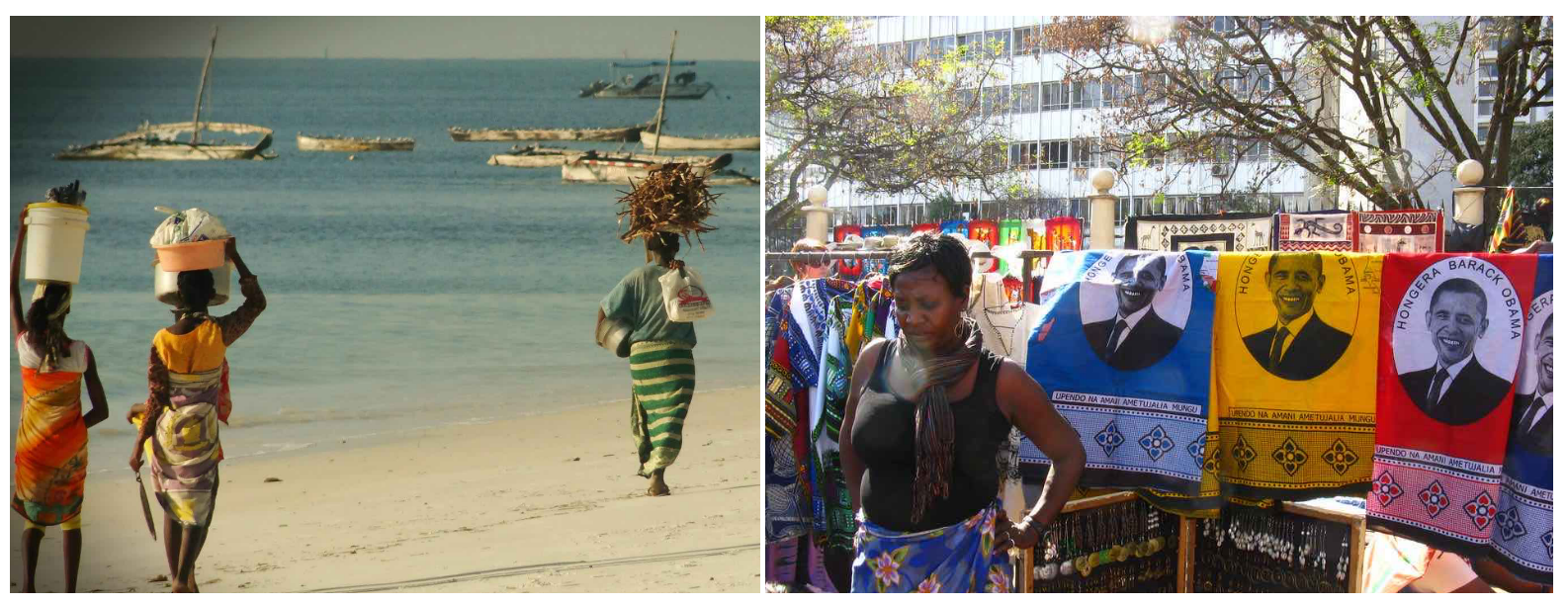

What happened? Data has long been a critical ingredient for ML but is typically overshadowed by advances in modelling. Given the importance of data for scaling up models, however, attention is slowly shifting from model-centric to data-centric approaches. Important topics include how to build and maintain new datasets efficiently and how to ensure data quality (see the Data-centric AI workshop at NeurIPS 2021 for an overview). In particular, large-scale datasets used by pre-trained models came under scrutiny this year, including multi-modal datasets as well as English and multilingual text corpora. Such an analysis can inform the design of more representative resources such as MaRVL for multi-modal reasoning.

Why is it important? Data is critically important for training large-scale ML models and a key factor in how models acquire new information. As models are scaled up, ensuring data quality at scale becomes more challenging.

What’s next? We currently lack best practices and principled methods for efficiently building datasets for different tasks and for reliably ensuring data quality. It is also still poorly understood how data interacts with a model’s learning and how the data shapes a model’s biases. For instance, training data filtering may have negative effects on a language model’s coverage of marginalized groups.

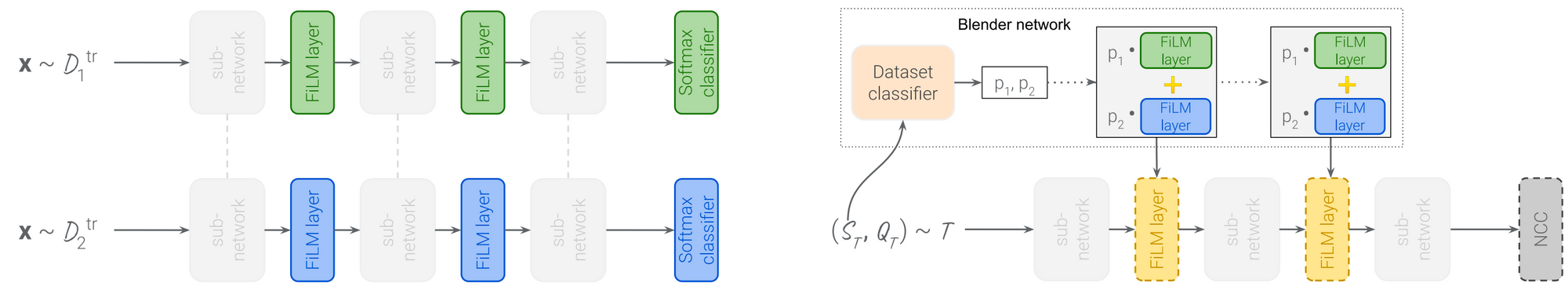

What happened? Meta-learning and transfer learning, despite sharing the common goal of few-shot learning, have been studied mostly in distinct communities. On a new benchmark, large-scale transfer learning methods outperform meta-learning-based approaches. A promising direction is to scale up meta-learning methods, which, combined with more memory-efficient training methods, can improve the performance of meta-learning models on real-world benchmarks. Meta-learning methods can also be combined with efficient adaptation methods such as FiLM layers to adapt a general model effectively to new datasets.
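For readers unfamiliar with FiLM layers, here is a minimal sketch of the operation itself: a feature-wise scale and shift applied to the activations of a shared model. The feature values and the per-dataset parameters below are hypothetical, and how those parameters are produced (for instance by a meta-learned network) is not shown.

```python
def film(features, gamma, beta):
    """Feature-wise linear modulation: scale and shift each feature independently."""
    return [g * x + b for x, g, b in zip(features, gamma, beta)]


frozen_features = [0.5, -1.0, 2.0]  # activations of a shared, frozen layer

# Hypothetical per-dataset modulation parameters; only these would be adapted.
task_a = {"gamma": [1.0, 1.0, 1.0], "beta": [0.0, 0.0, 0.0]}   # identity modulation
task_b = {"gamma": [2.0, 0.0, 1.0], "beta": [0.0, 1.0, -2.0]}

features_a = film(frozen_features, task_a["gamma"], task_a["beta"])
features_b = film(frozen_features, task_b["gamma"], task_b["beta"])
```

Adapting to a new dataset then means choosing two small vectors per modulated layer while the shared weights stay fixed.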

Why is it important? Meta-learning is an important paradigm but has fallen short of yielding state-of-the-art results on standard benchmarks that are not designed with meta-learning systems in mind. Bringing the meta-learning and transfer learning communities closer together may lead to more practical meta-learning methods that are useful in real-world applications.

What’s next? Meta-learning can be particularly useful when combined with the large number of natural tasks available for massive multi-task learning. Meta-learning can also help improve prompting by learning how to design or use prompts based on the large number of available prompts.

Citation

For attribution in academic contexts or books, please cite this work as:

Sebastian Ruder, "ML and NLP Research Highlights of 2021". http://ruder.io/ml-highlights-2021/, 2022.

BibTeX citation:

@misc{ruder2022mlhighlights,

author = {Ruder, Sebastian},

title = {{ML and NLP Research Highlights of 2021}},

year = {2022},

howpublished = {\url{http://ruder.io/ml-highlights-2021/}},

}

Credits

Thanks to Eleni Triantafillou and Dani Yogatama for thoughts and suggestions.