ACL 2022 took place in Dublin from 22–27 May 2022. This was my first in-person conference since ACL 2019. It is also my first conference highlights post since NAACL 2019. With 1032 accepted papers (604 long, 97 short, 331 in Findings), this post can only offer a glimpse of the diverse research presented at the conference, biased towards my research interests.

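Here are the themes that were most noticeable to me across the conference program:

- Language diversity and multimodality
- Prompting
- Next Big Ideas
- Favourite Papers
- The Dark Matter of Language and Intelligence
- Hybrid conference experience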

Here are highlights from other conference attendees:

If you attended ACL, I encourage you to reflect on and write up your impressions of the conference (send me a message and I'll link them here).

Language diversity and multimodality

ACL 2022 had a theme track on the topic of "Language Diversity: from Low-Resource to Endangered Languages". Beyond the excellent papers in the track, language diversity also permeated other parts of the conference. Steven Bird hosted a panel on language diversity featuring researchers who speak and study under-represented languages (slides). The panelists shared their experiences and discussed interlingual power dynamics, among other topics. They also made practical suggestions to encourage more work on such languages: creating data resources; establishing a conference track for work on low-resource and endangered languages; and encouraging researchers to apply their systems to low-resource language data. They also mentioned a positive development: researchers are becoming more aware of the value of high-quality datasets. Overall, the panelists emphasised that working with such languages requires respect: towards the speakers, the culture, and the languages themselves.

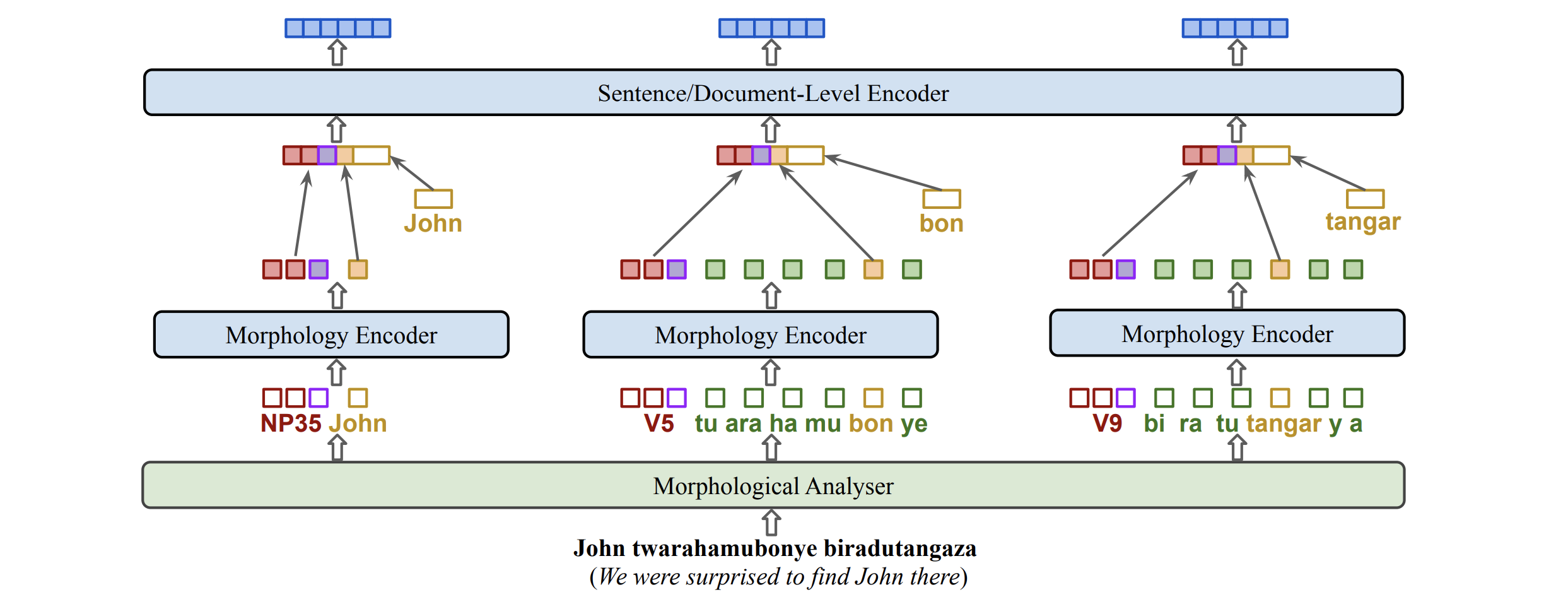

Endangered languages were also the focus of the Compute-EL workshop. At the awards ceremony, the best linguistic insight paper went to KinyaBERT, a pre-trained model for Kinyarwanda that leverages a morphological analyzer. The best theme paper developed speech synthesis models for three Canadian indigenous languages. The latter is an example of how multimodal approaches can benefit language diversity.

Other multimodal papers leveraged phone representations to improve NER performance in Swahili and Kinyarwanda (Leong & Whitenack). For low-resource text-to-speech, Lux & Vu employ articulatory features such as place (e.g., frontness of the tongue) and category (e.g., voicedness), which generalize better to unseen phonemes. Some work also explored new multimodal applications such as detecting fingerspelling in American Sign Language (Shi et al.) or translating songs for tonal languages (Guo et al.).

The Multilingual Multimodal workshop hosted a shared task on multilingual visually grounded reasoning on the MaRVL dataset. The emergence of such multilingual multimodal approaches is particularly encouraging. It is an improvement over the previous year's ACL, where multimodal approaches mainly dealt with English (based on an analysis of "multi-dimensional" NLP research we did for an ACL 2022 Findings paper).

In an invited talk (slides), I emphasised multimodality in addition to three other challenges towards scaling NLP systems to the next 1,000 languages: computational efficiency, real-world evaluation, and language varieties. Multimodality is also at the heart of the ACL 2022 D&I Special Initiative "60-60 Globalization via Localization" announced by Mona Diab. The initiative focuses on making Computational Linguistics (CL) research accessible in 60 languages and across all modalities, including text/speech/sign language translation, closed captioning, and dubbing. Another useful aspect of the initiative is the curation of the most common CL terms and their translation into 60 languages. The lack of accurate scientific terminology poses a barrier to entry in many languages (see this related Masakhane project to decolonise science). The CL community is well positioned to advance the accessibility of scientific content, and I'm excited to see the progress of this grassroots initiative.

Under-represented languages typically have little text data available. Two tutorials focused on applying models in such low-resource settings. The tutorial on learning with limited text data discussed data augmentation, semi-supervised learning, and applications to multilinguality. The tutorial on zero-shot and few-shot NLP with pre-trained language models covered prompting, in-context learning, and gradient-based LM task adaptation, among other topics.

How to optimally represent tokens across different languages is an open problem. The conference program featured several new approaches to this challenge. KinyaBERT (Nzeyimana & Rubungo) leveraged a morphological word segmentation approach. Similarly, Hofmann et al. propose a method that aims to preserve the morphological structure of words during tokenization. The algorithm tokenizes a word by finding its longest substring in the vocabulary and then recursing on the remaining string until a fixed number of recursive calls has been reached.
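The longest-substring procedure described above can be sketched in a few lines. This is a minimal illustration, not Hofmann et al.'s implementation: the toy vocabulary, the `max_calls` parameter name, and the choice of a `"\0"` placeholder to mask already-matched spans are all assumptions made for the example. Masking rather than deleting the matched span keeps character positions aligned, so the chosen pieces can be put back in their left-to-right order at the end.

```python
MASK = "\0"  # placeholder character, assumed never to occur in real words


def longest_vocab_substring(s, vocab):
    """Return (start, end) of the longest unmasked substring of s found in vocab."""
    for length in range(len(s), 0, -1):
        for start in range(len(s) - length + 1):
            piece = s[start:start + length]
            if MASK not in piece and piece in vocab:
                return start, start + length
    return None


def longest_substring_tokenize(word, vocab, max_calls=3):
    """Greedily pick the longest in-vocabulary substring, mask it, and recurse."""
    found = []  # (position in word, token) pairs

    def recurse(s, calls_left):
        if calls_left == 0:
            return
        span = longest_vocab_substring(s, vocab)
        if span is None:
            return
        start, end = span
        found.append((start, s[start:end]))
        # Masking keeps the string length fixed, so positions stay aligned with `word`.
        recurse(s[:start] + MASK * (end - start) + s[end:], calls_left - 1)

    recurse(word, max_calls)
    # Return the selected pieces in their left-to-right order within the word.
    return [token for _, token in sorted(found)]


toy_vocab = {"un", "break", "able", "a", "b"}
print(longest_substring_tokenize("unbreakable", toy_vocab))
print(longest_substring_tokenize("unbreakable", toy_vocab, max_calls=2))
```

Capping the number of calls means that leftover characters, such as "un" when `max_calls=2`, are simply dropped rather than split into ever smaller fragments.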

Rather than selecting subwords that occur frequently in the multilingual pre-training data (which biases the model towards high-resource languages), Patil et al. propose a method that prefers subwords shared across multiple languages. CANINE (Clark et al.) and ByT5 (Xue et al.) do away with tokenization completely and operate directly on characters and bytes, respectively.
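To make the tokenization-free inputs concrete, the sketch below contrasts the two representations. ByT5 feeds the model UTF-8 bytes, while CANINE, as far as I know, consumes Unicode code points. The offset of three reserved ids for special tokens reflects my understanding of ByT5's vocabulary layout and should be treated as an assumption. The comparison shows that scripts outside ASCII need several bytes per character, which lengthens byte-level sequences for many languages.

```python
NUM_SPECIAL_IDS = 3  # assumption: ids 0-2 reserved for special tokens (e.g., pad/eos/unk)


def byte_ids(text):
    """Byte-level input ids: one id per UTF-8 byte, shifted past the special ids."""
    return [b + NUM_SPECIAL_IDS for b in text.encode("utf-8")]


def codepoint_ids(text):
    """Character-level input ids: one id per Unicode code point."""
    return [ord(ch) for ch in text]


# Compare sequence lengths for a Latin, an Ethiopic (Amharic), and a CJK string.
for word in ["cat", "ሰላም", "日本語"]:
    print(word, len(codepoint_ids(word)), len(byte_ids(word)))
```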

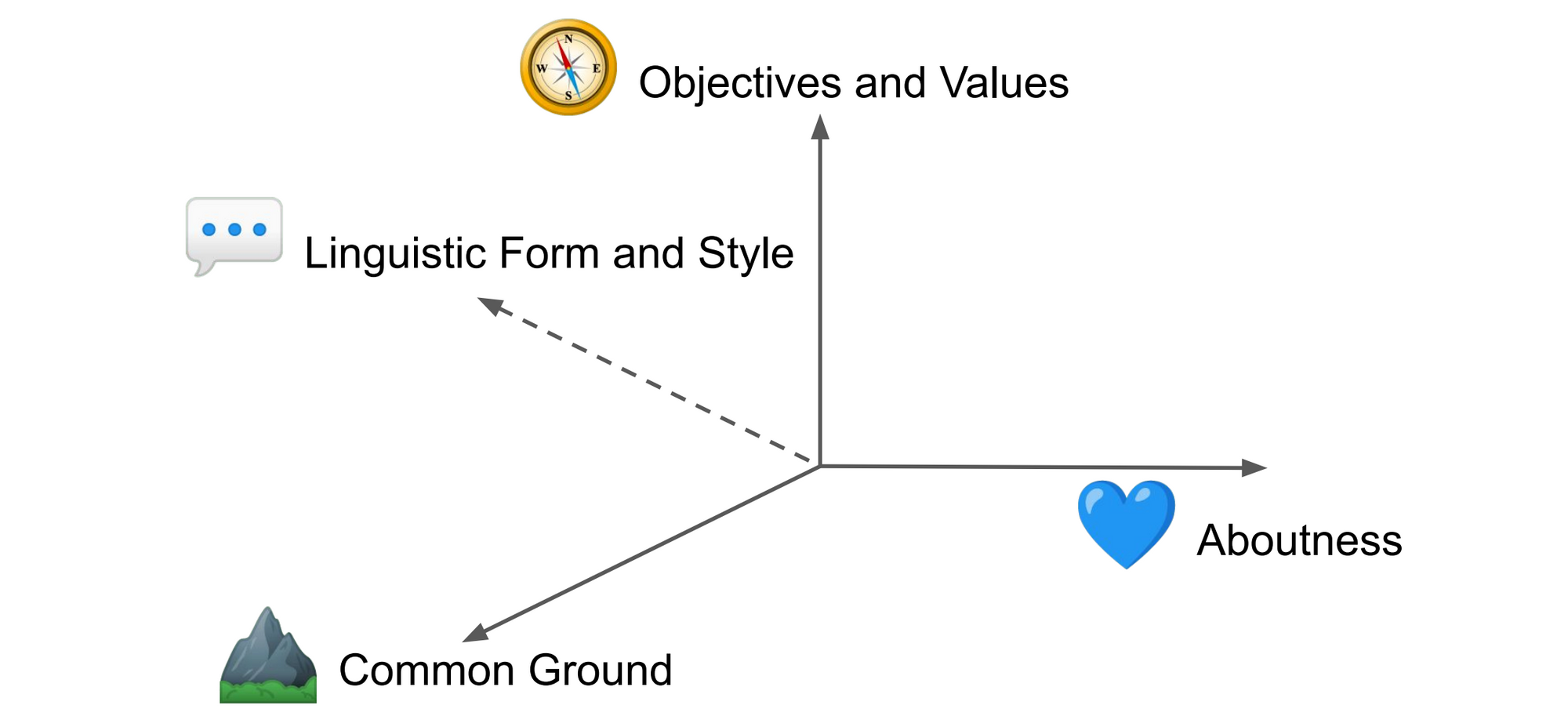

Languages naturally differ not only in their linguistic form but also in their culture, which includes the shared knowledge, values, and goals of speakers, among other things. Hershcovich et al. provide a great overview of what is important for cross-cultural NLP. A particular kind of culture-specific knowledge relates to time and temporal expressions such as "morning", which may refer to different hours in different languages (Schwartz).

Here are some of my favourite papers, beyond the ones already mentioned above:

- Towards Afrocentric NLP for African Languages: Where We Are and Where We Can Go (Adebara & Abdul-Mageed). This paper discusses challenges of NLP for African languages and makes practical recommendations on how to address each. It highlights both linguistic phenomena (handling tone, vowel harmony, and serial verb constructions) and other challenges on the continent (low literacy, non-standardized orthographies, lack of language use in official contexts).

- Quality at a Glance: An Audit of Web-Crawled Multilingual Datasets (Kreutzer et al.). I wrote about this paper when it first came out. It conducts a careful audit of large-scale multilingual datasets covering 70 languages and identifies many data quality issues that had previously gone unnoticed. It highlights that many low-resource language datasets are of low quality and that some datasets are even completely mislabeled.

- Multi Task Learning For Zero Shot Performance Prediction of Multilingual Models (Ahuja et al.). We would like to know how well a model performs if we apply it to data in a new language, which can inform how many examples we need to annotate. This paper makes performance prediction more robust by jointly learning to predict performance across multiple tasks. This also enables an analysis of the features that affect zero-shot transfer across all tasks.

I had the chance to collaborate on a couple of papers in this area:

- One Country, 700+ Languages: NLP Challenges for Underrepresented Languages and Dialects in Indonesia (Aji et al.). We provide an overview of NLP challenges for Indonesia's 700+ languages (Indonesia is the world's second most linguistically diverse country). Among these are dialectal and style variation, code-mixing, and orthographic variation. We make practical recommendations such as documenting dialect, style, and register information in datasets.

- Expanding Pretrained Models to Thousands More Languages via Lexicon-based Adaptation (Wang et al.). We analyze different strategies that use bilingual lexicons (which are available for around 5000 languages) to synthesize data for training models for extremely low-resource languages, and how such data can be combined with existing data, if available. Notably, we find that this works much better than translation (as the performance of NMT models for such languages is often poor).

- Square One Bias in NLP: Towards a Multi-Dimensional Exploration of the Research Manifold (Ruder et al.). We identify the current prototypical NLP experiment (the "square one") and, by annotating the 461 ACL 2021 oral papers, assess the dimensions along which NLP papers make contributions that go beyond this prototype. We find that almost 70% of papers evaluate only in English and almost 40% of papers only evaluate performance. Only 6.3% of papers evaluate the bias or fairness of a method, and only 6.1% of papers are "multi-dimensional", i.e., they make a contribution along two or more of our investigated dimensions.

Prompting

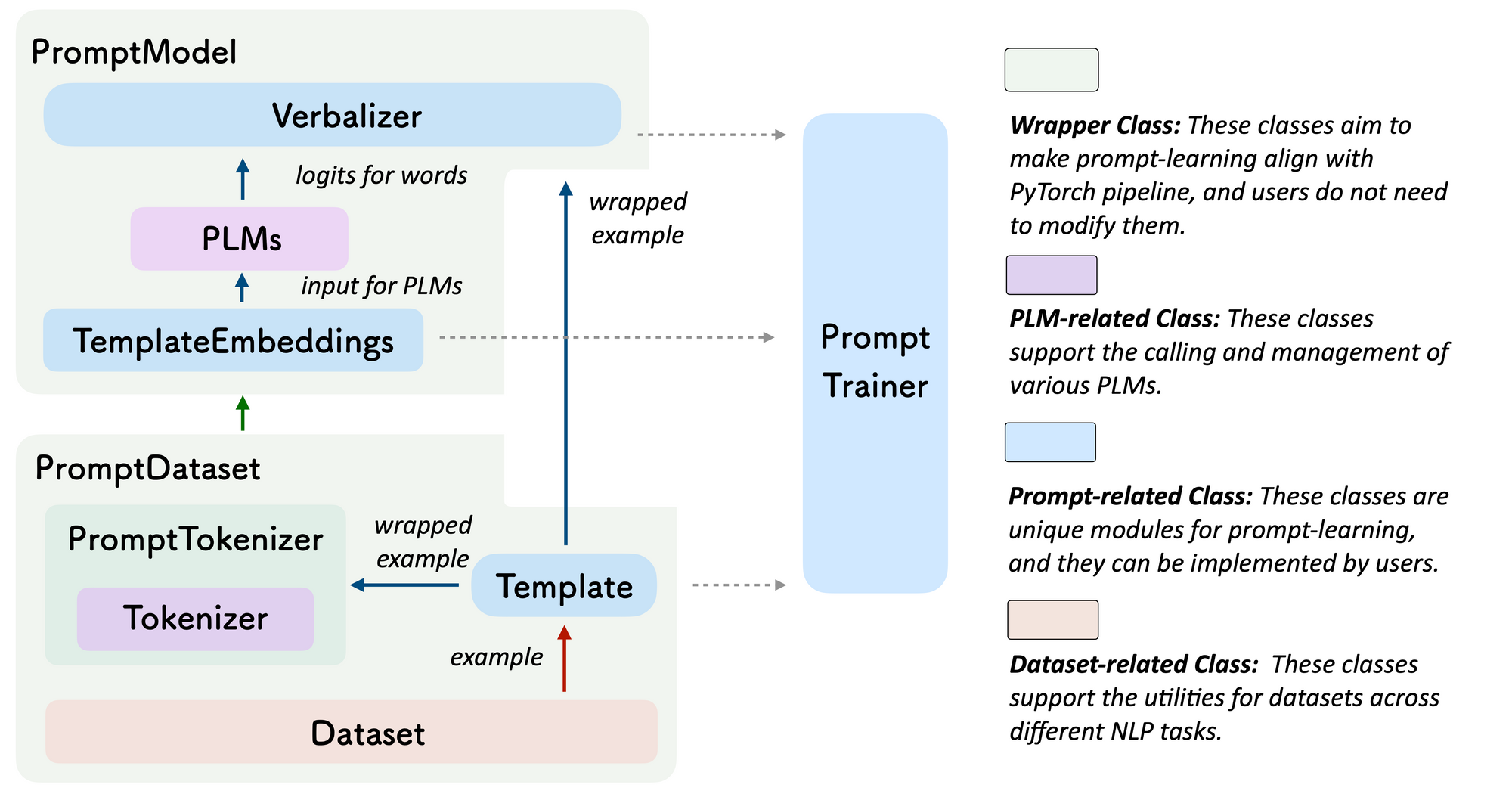

Prompting was another area that received a lot of attention. The best demo paper was OpenPrompt, an open-source framework for learning with prompts that makes it easy to define templates and verbalizers and to combine them with pre-trained models.
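To make the template and verbalizer terminology concrete, here is a plain-Python sketch. It deliberately does not use OpenPrompt's actual API. The template string, the label words, and the probabilities are hypothetical. In practice, the probabilities would come from a masked LM's prediction at the `[MASK]` position of the filled-in template.

```python
TEMPLATE = "{text} It was [MASK]."
VERBALIZER = {"positive": "great", "negative": "terrible"}  # label -> label word


def apply_template(text, template=TEMPLATE):
    """Wrap a raw input in the cloze-style template."""
    return template.format(text=text)


def predict_label(mask_word_probs, verbalizer=VERBALIZER):
    """Pick the label whose word the LM rates most likely at the [MASK] slot."""
    return max(verbalizer, key=lambda label: mask_word_probs.get(verbalizer[label], 0.0))


prompt = apply_template("The keynote was inspiring.")
# Made-up numbers standing in for a masked LM's distribution at [MASK].
fake_probs = {"great": 0.31, "good": 0.20, "terrible": 0.02}
print(prompt)
print(predict_label(fake_probs))
```

The template turns classification into the LM's pre-training task (filling a blank), and the verbalizer maps the filled-in word back to a label.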

A common thread of research was to incorporate external knowledge into learning. Hu et al. propose to expand the verbalizer with words from a knowledge base. Liu et al. use an LM to generate relevant knowledge statements in a few-shot setting. A second LM then uses these statements to answer commonsense questions. We can also incorporate additional knowledge by modifying the training data, e.g., by inserting metadata strings (such as entity types and descriptions) after entities (Arora et al.).
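An expanded verbalizer lets each label be represented by many related words instead of a single one. The sketch below averages the `[MASK]` probabilities over a label's words. This averaging is only one simple aggregation choice. Hu et al.'s method additionally refines the expanded word set, and those steps are omitted here. The label words and probabilities are invented for illustration, not taken from any knowledge base.

```python
# Hypothetical label words; in Hu et al.'s setting these would come from a knowledge base.
EXPANDED_VERBALIZER = {
    "sports": ["football", "tennis", "athlete", "league"],
    "science": ["physics", "biology", "laboratory", "experiment"],
}


def label_score(mask_word_probs, label_words):
    """Average the [MASK] probabilities of all words associated with a label."""
    return sum(mask_word_probs.get(w, 0.0) for w in label_words) / len(label_words)


def predict_label(mask_word_probs, verbalizer=EXPANDED_VERBALIZER):
    """Pick the label with the highest aggregated score."""
    return max(verbalizer, key=lambda label: label_score(mask_word_probs, verbalizer[label]))


# Made-up probabilities standing in for a masked LM's output at [MASK].
fake_probs = {"tennis": 0.12, "league": 0.05, "physics": 0.03}
print(predict_label(fake_probs))
```

With these numbers, a single-word verbalizer such as `{"sports": ["football"], "science": ["physics"]}` would predict "science", because "football" receives no probability mass. The expanded word set recovers "sports" from the related words.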

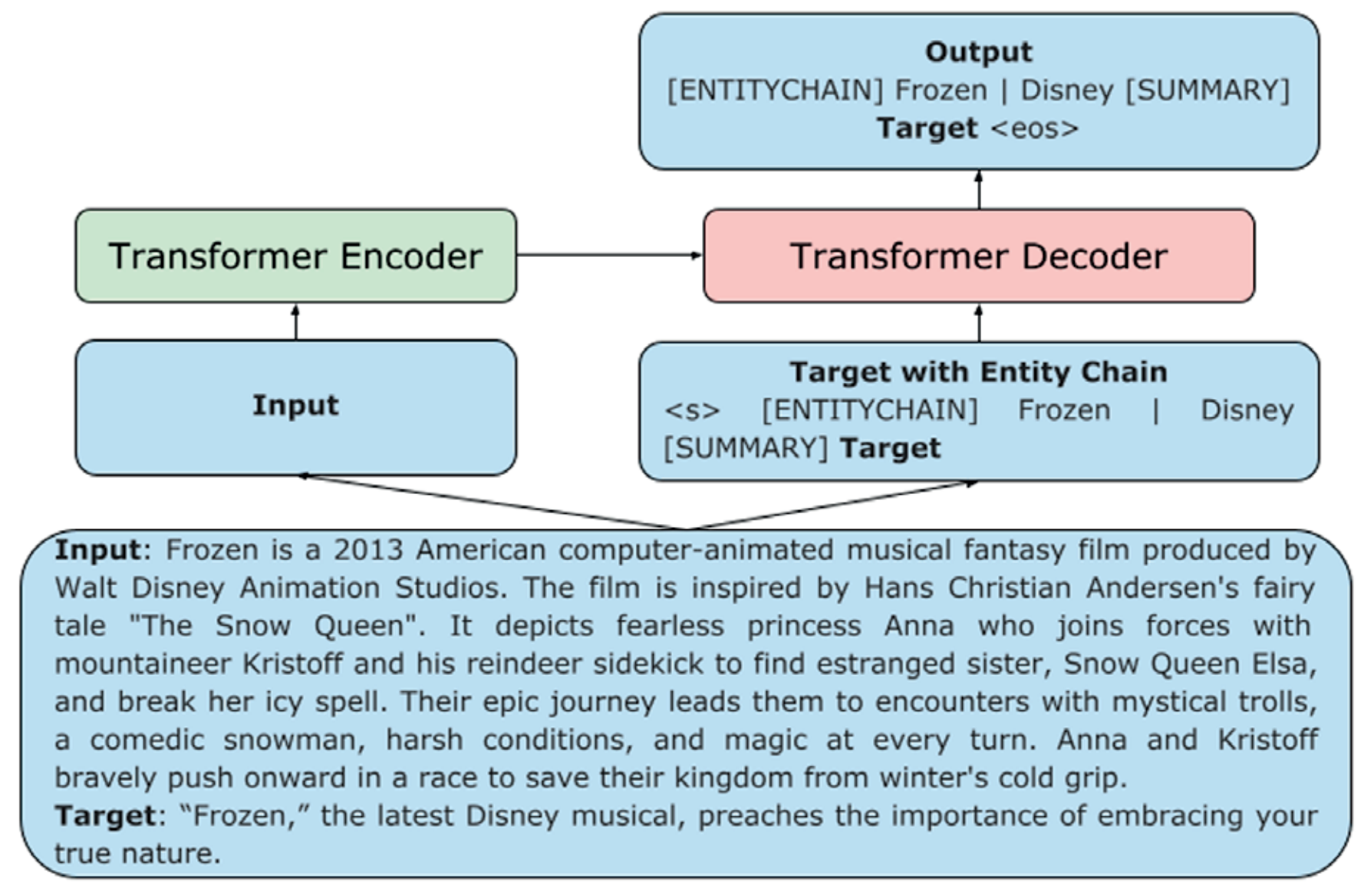

Other papers proposed prompts particularly suited to specific applications. Reif et al. provide a model with examples of multiple styles for style transfer, while Tabasi et al. compare independently obtained embeddings of [MASK] tokens using a similarity function for semantic similarity tasks. Narayan et al. steer a summarization model by training it to predict a chain of entities before the target summary (e.g., "[ENTITYCHAIN] Frozen | Disney"). Schick et al. prompt a model with questions containing an attribute (e.g., "Does the above text contain a threat?") to diagnose whether a model's generated text is offensive. Ben-David et al. generate the domain name and domain-related features as a prompt for domain adaptation.
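The entity-chain idea comes down to how training targets are formatted. The sketch below builds such a target and splits a generated output back into its plan and summary. The `[SUMMARY]` marker and the `" | "` separator are assumptions based on the example above, not a confirmed specification of Narayan et al.'s format. `str.removeprefix` requires Python 3.9 or later.

```python
def entity_chain_target(entities, summary):
    """Training target: an entity plan followed by the summary it should ground."""
    return f"[ENTITYCHAIN] {' | '.join(entities)} [SUMMARY] {summary}"


def split_prediction(output):
    """Recover the predicted entity plan and summary from a generated string."""
    plan, _, summary = output.partition(" [SUMMARY] ")
    entities = plan.removeprefix("[ENTITYCHAIN] ").split(" | ")
    return entities, summary


target = entity_chain_target(["Frozen", "Disney"], "Frozen was released by Disney.")
print(target)
print(split_prediction(target))
```

At inference time, the plan is what makes steering possible. One can edit the predicted chain, for example by dropping an entity the summary should not mention, and have the model continue decoding from the edited prefix.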

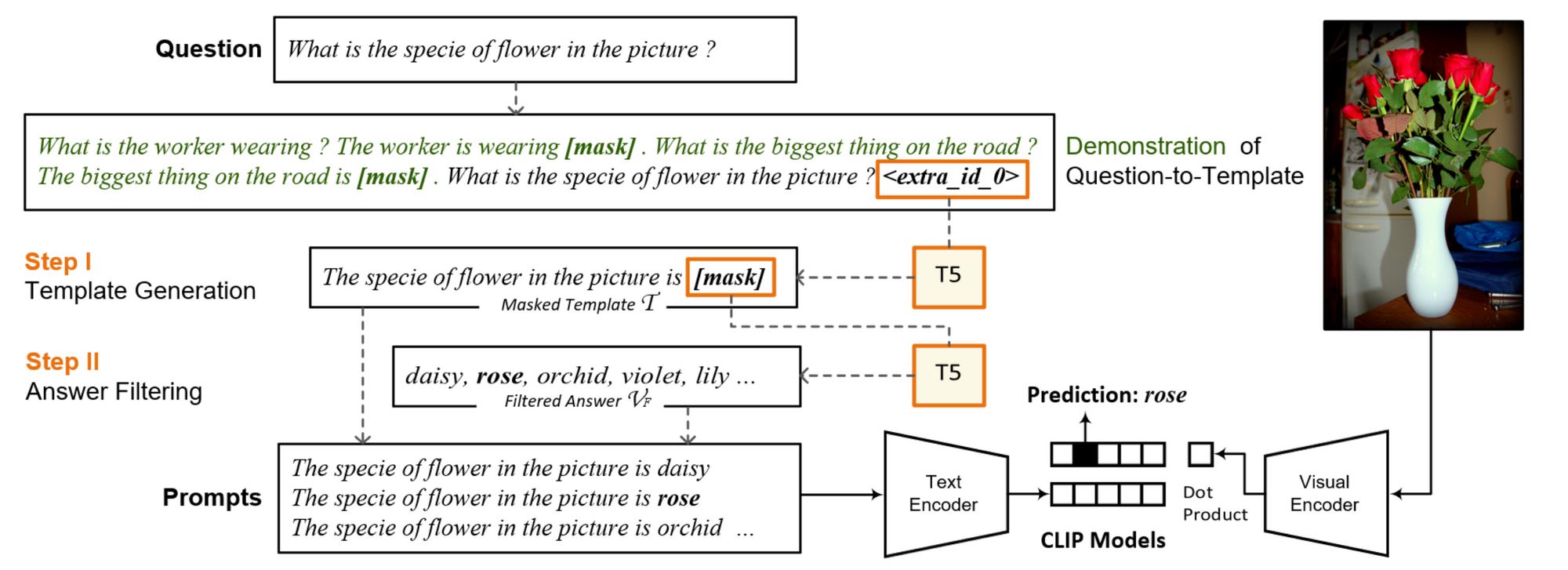

Prompting in a multimodal setting also received some attention. Jin et al. analyze the effect of diverse prompts in a few-shot setting. Song et al. investigate prompting for vision-and-language few-shot learning with CLIP. They generate prompts based on VQA questions using T5 and filter out impossible answers with the LM. The prompts are then paired with the target image and used to calculate image-text alignment scores with CLIP.

Finally, several papers sought a better understanding of prompting. Mishra et al. explore different ways of reformulating instructions, such as decomposing a complex task into several simpler tasks or itemizing instructions. Lu et al. analyze the sensitivity of models to the order of few-shot examples. As the best permutation cannot be identified without additional development data, they generate a synthetic dev set using the LM itself and select the best example order via entropy.
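The intuition behind Lu et al.'s order selection is that a good ordering should not make the model collapse onto a single label across probe inputs. Below is a minimal sketch of entropy-based selection. It assumes the statistic is the entropy of the predicted-label distribution over the synthetic probe set, which, as I understand it, is one of the entropy measures the paper describes. The toy predictor, demonstrations, and probe strings are invented stand-ins for a real LM and LM-generated probes.

```python
import math
from collections import Counter
from itertools import permutations


def label_entropy(predicted_labels):
    """Entropy (in nats) of the predicted-label distribution over a probe set."""
    counts = Counter(predicted_labels)
    total = len(predicted_labels)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def best_order(demonstrations, predict_fn, probe_inputs):
    """Return the demonstration order whose probe predictions are most balanced."""
    best, best_entropy = None, -1.0
    for order in permutations(demonstrations):  # k! orders; sample them when k is large
        entropy = label_entropy([predict_fn(order, x) for x in probe_inputs])
        if entropy > best_entropy:
            best, best_entropy = order, entropy
    return best, best_entropy


def toy_predict(order, x):
    """Hypothetical stand-in for an LM: copies the label of the last demonstration
    for odd-length inputs and of the first demonstration otherwise."""
    return order[-1][1] if len(x) % 2 else order[0][1]


DEMOS = [("good film", "pos"), ("bad film", "neg"), ("fine film", "pos")]
PROBES = ["a", "bb", "ccc", "dddd"]  # in Lu et al.'s setup these would be LM-generated

order, entropy = best_order(DEMOS, toy_predict, PROBES)
print(order, round(entropy, 3))
```

Orders that begin and end with the same label make this toy model predict one label for every probe (entropy 0). They are therefore rejected in favour of an order whose predictions are evenly split.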

The following papers on which I collaborated relate to few-shot learning:

- FewNLU: Benchmarking State-of-the-Art Methods for Few-Shot Natural Language Understanding (Zheng et al.). We introduce an evaluation framework to make few-shot evaluation more reliable, including a new data split strategy. We re-evaluate state-of-the-art few-shot learning methods under this framework. Among other things, we observe that the absolute and relative performance of some methods was overestimated and that the improvements of some methods decrease with a larger pre-trained model.

- Memorisation versus Generalisation in Pre-trained Language Models (Tänzer et al.). We study the memorisation and generalisation behaviour of state-of-the-art pre-trained models. We observe that current models are resistant even to high degrees of label noise and that training can be separated into three distinct phases. We also observe that pre-trained models forget substantially less than non-pre-trained models. Finally, we propose an extension to make models more resilient to low-frequency patterns.

Next Big Ideas

One of my favourite sessions of the conference was the Next Big Ideas session, a new format pioneered by the conference organizers. The session featured senior researchers giving opinionated takes on important research directions.

Two themes stood out for me during this session: structure and modularity. Researchers stressed the need to extract and represent structured information such as relations, events, and narratives. They also emphasised the importance of putting thought into how these are represented, through human definitions and the design of appropriate schemas. Many topics required dealing with multiple interdependent tasks, whether for story understanding, reasoning, or schema learning. This will require multiple models or components that interface with each other. If you want to learn more about modular approaches, we will be teaching a tutorial on modular and parameter-efficient fine-tuning for NLP models at EMNLP 2022. As a whole, these research proposals sketched a compelling vision of NLP models that extract, represent, and reason with complex knowledge in a structured, multi-agent manner.

Heng Ji started off the session with a passionate plea for more structure in NLP models. She emphasised moving towards corpus-level IE (from current sentence-level and document-level IE). She also highlighted the extraction of relations and structures from other types of text, such as scientific articles, as well as relation and event extraction for low-resource languages. In the multimodal setting, images and videos can be converted into visual tokens, organized into structures, and described with structured templates. Extracted structures can be further generalized into patterns and event schemas. We can represent structure by embedding it in pre-trained models, by encoding it via a graph neural network, or via global constraints.

Mirella Lapata discussed stories and why we should pay attention to them. Stories have shape, structure, and recurrent themes and are at the heart of NLU. They are also relevant for many practical applications such as question answering and summarization. To process stories, we need to do semi-supervised learning and train models that can process very long inputs and deal with multiple, interdependent tasks (such as modeling characters, events, temporality, etc.). This requires modular models as well as including humans in the loop.

Dan Roth stressed the importance of reasoning for making decisions based on NLU. Given the diverse set of reasoning processes, this requires multiple interdependent models and a planning process that determines which modules are relevant. We also need to be able to reason about time and other quantities. To this end, we need to be able to extract, contextualize, and scope relevant information and to provide explanations for the reasoning process. To supervise models, we can use incidental supervision such as comparable texts (Roth, 2017).

Thamar Solorio discussed how to serve the half of the world's population that is multilingual and frequently code-switches. In contrast, current language technology mainly caters to monolingual speakers. Informal settings where code-switching is common, such as chatbots, voice assistants, and social media, are becoming increasingly relevant. She noted challenges such as limited resources, "noise" in conversational data, and issues with transliterated data. We also need to identify relevant use cases, as code-switching is not relevant in all NLP scenarios. Ultimately, "we need language models that are representative of the actual ways in which people use language" (Dingemanse & Liesenfeld). For more on code-switching, check out this excellent ACL 2021 survey.

Marco Baroni focused on modularity. He laid out a research vision in which frozen pre-trained networks interact autonomously, interfacing with each other to solve new tasks together. He suggested that models should communicate through a learned interface protocol that is easily generalizable.

Eduard Hovy urged us to rediscover the need for representation and knowledge. When knowledge is rare or never appears in the training data, as is the case for implicit knowledge, it is not learned by our models. To fill these gaps, we need to define the set of human goals that we care about and schemas that capture what was not said or what will be said. This requires extending schema learning to a set of inter-related schemas, such as schemas of patient, epidemiologist, and pathogen in the context of a pandemic. Similarly, to capture the roles of people in groups, we need human definitions and guidance. Overall, he encouraged the community to put thought into building topologies that can be learned by models.

Finally, Hang Li emphasised the need for symbolic reasoning. He proposed a neuro-symbolic architecture for NLU that combines analogical reasoning via a pre-trained model with logical reasoning via symbolic components.

In addition to the Next Big Ideas session, the conference also featured spotlight talks by early-career researchers. I had the honour of speaking alongside amazing young researchers such as Eunsol Choi, Diyi Yang, Ryan Cotterell, and Swabha Swayamdipta. I hope that future conferences will continue these formats and experiment with others, as they bring a fresh perspective and enable a broader view of research.

Favourite Papers

Finally, here are some of my favourite papers on less mainstream topics. Quite a few of these are in TACL, highlighting the usefulness of TACL as a venue for publishing nuanced research. Many of them also emphasize the human side of NLP:

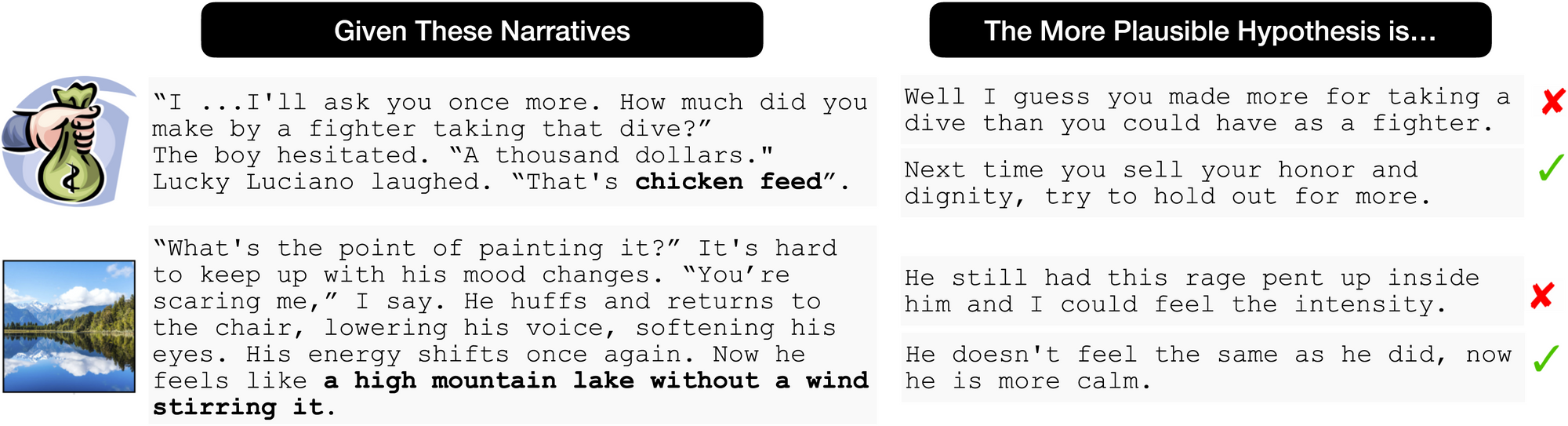

- It's not Rocket Science: Interpreting Figurative Language in Narratives (Chakrabarty et al.). This paper focuses on understanding figurative language (idioms and similes). The authors evaluate whether models can interpret such expressions by framing the task in an LM setting: they use crowd workers to create plausible and implausible continuations, and choosing between them depends on the correct interpretation of the expression. They find that state-of-the-art models perform poorly on this task. Performance can be improved, however, by providing additional context or knowledge-based inferences (e.g., "The narrator sweats from nerves", "Run is used for exercise") as input.

- Word Acquisition in Neural Language Models (Chang & Bergen). This paper investigates when individual words are acquired during the training of neural models, compared to word acquisition in humans. The authors find that LMs rely far more on word frequency than children do (children rely more on interaction and sensorimotor experience). Like children, LMs also learn words more slowly when they appear in longer utterances. Early in training, models predict based on unigram token frequencies, later on bigram probabilities, and eventually converge to more nuanced predictions.

- The Moral Debater: A Study on the Computational Generation of Morally Framed Arguments (Alshomary et al.). This paper studies the automatic generation of morally framed arguments. The system takes a controversial topic (e.g., globalization), a stance (e.g., pro), and a set of morals (e.g., loyalty, authority, and purity) as input. It retrieves and filters relevant texts based on the morals and then phrases an argument. The authors employ a Reddit dataset annotated with aspects (e.g., respect, obedience), which they map to morals. They evaluate the effectiveness of morally framed arguments in a user study with liberals and conservatives.

- Human Language Modeling (Soni et al.). This paper extends the language modeling task with a dependence on the human state in which the text was generated. To this end, the authors process all utterances of a user sequentially and condition a Transformer's self-attention on a recurrently computed user state. The model is pre-trained on Facebook posts and tweets with user information. It achieves a dramatic reduction in perplexity and improvements on downstream stance and sentiment tasks.

- Inducing Positive Perspectives with Text Reframing (Ziems et al.). This paper introduces the task of positive reframing, which neutralizes a negative viewpoint and generates a more positive perspective without contradicting the original meaning (e.g., "I absolutely hate making decisions" → "It'll become easier once I start to get used to it"). The authors create a new dataset for this task, annotated with different reframing strategies. Overall, the task is an interesting and challenging application of style transfer. And who isn't in need of positive reframing sometimes?

The Dark Matter of Language and Intelligence

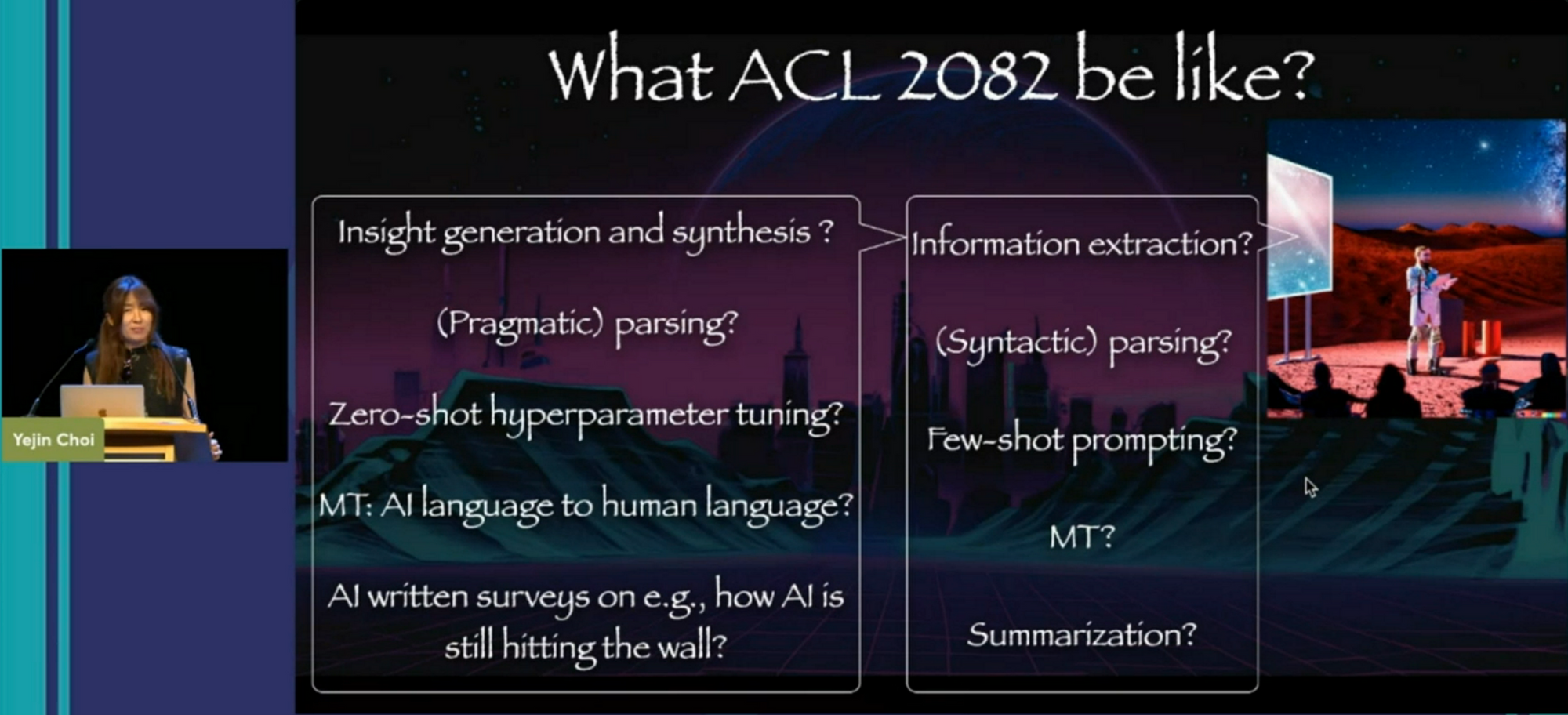

Yejin Choi gave an inspiring keynote. Among other things, it was the first talk I have seen that used DALL-E 2 to illustrate its slides. She highlighted three important research areas in NLP by drawing analogies to physics: ambiguity, reasoning, and implicit information.

In modern physics, a greater understanding often leads to increased ambiguity (see, for instance, Schrödinger's cat or wave–particle duality). Yejin similarly encouraged the ACL community to embrace ambiguity. In the past, there was pressure not to work on tasks with low inter-annotator agreement; similarly, in traditional sentiment analysis, the neutral class is often discarded. Understanding cannot simply be squeezed into simple categories. Annotator opinions bias language models (Sap et al., 2021), and ambiguous examples improve generalization (Swayamdipta et al., 2020).

Similar in spirit to the notion of spacetime, Yejin argued that language, knowledge, and reasoning are not separate areas either but exist on a continuum. Reasoning methods such as maieutic prompting (Jung et al., 2022) allow us to investigate the continuum of a model's knowledge by recursively generating explanations.

Finally, analogous to the central role of dark matter in modern physics, future research in NLP should focus on the "dark matter" of language: the unspoken rules of how the world works, which influence the way people use language. We should aspire to teach our models such things as tacit rules, values, and goals (Jiang et al., 2021).

Yejin concluded her talk with a candid take on the factors that led to her success: being humble, learning from others, and taking risks, but also being lucky and working in an inclusive environment.

Hybrid conference experience

I personally really enjoyed the in-person conference experience. There was a strict mask-wearing requirement, which everyone adhered to and which did not otherwise impede the flow of the conference. The only issues were some technical problems during plenary and keynote talks.

On the other hand, I found it difficult to reconcile the in-person with the virtual conference experience. Virtual poster sessions overlapped with breakfast or dinner, which made attending them difficult. From what I have heard, many virtual poster sessions were almost empty. It seems we need to rethink how to run virtual poster sessions in a hybrid setting. As an alternative, it may be more effective to create asynchronous per-poster chat rooms in rocket.chat or a similar platform, with the option to set up impromptu video calls for deeper conversations.

The experience was better for oral presentations and workshops, which had a reasonable number of virtual participants. I particularly appreciate being able to rewatch the recordings of keynotes and other invited talks.

Overall, we still have some way to go to create a great experience for virtual participants. Still, it was a blast to interact with people in person again. Thanks to all the organizers, the volunteers, and the community for putting together a great conference!